Co-founder and Co-CEO, Yutori

News

- [Apr 24] Building Yutori to reimagine interaction with the web.

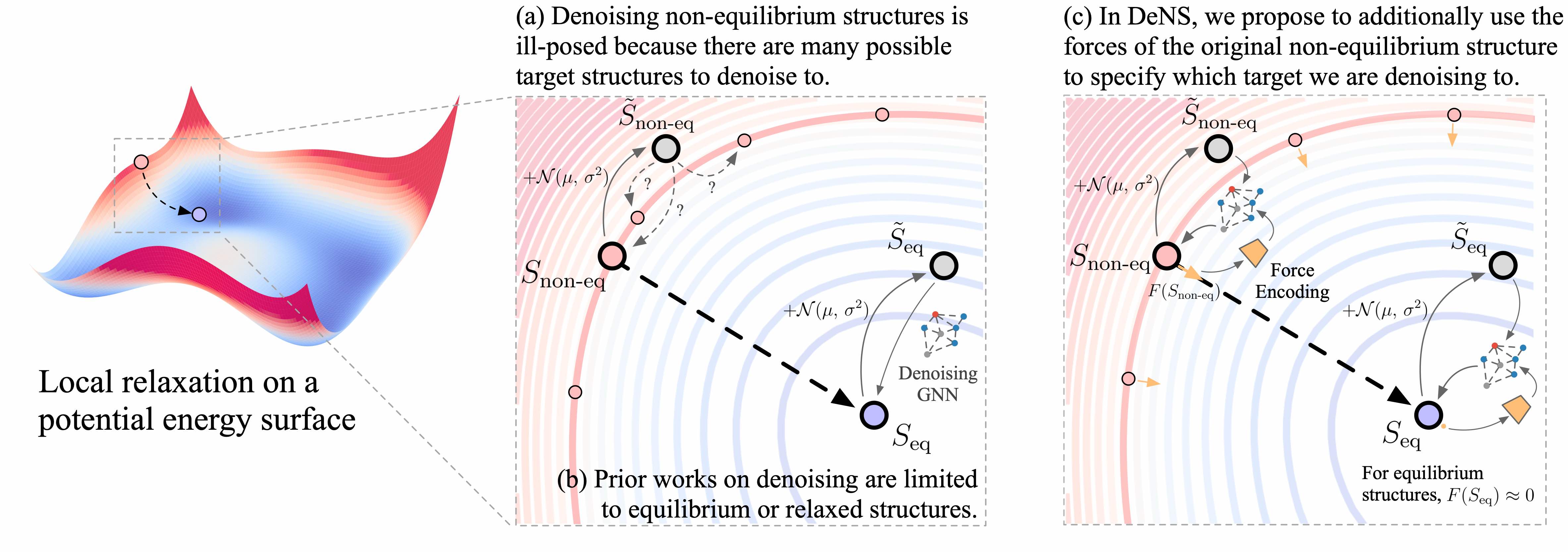

- [Mar 24] Introducing DeNS, a generalized denoising objective that enables the use of non-equilibrium (in addition to equilibrium) atomistic structures for training equivariant GNNs.

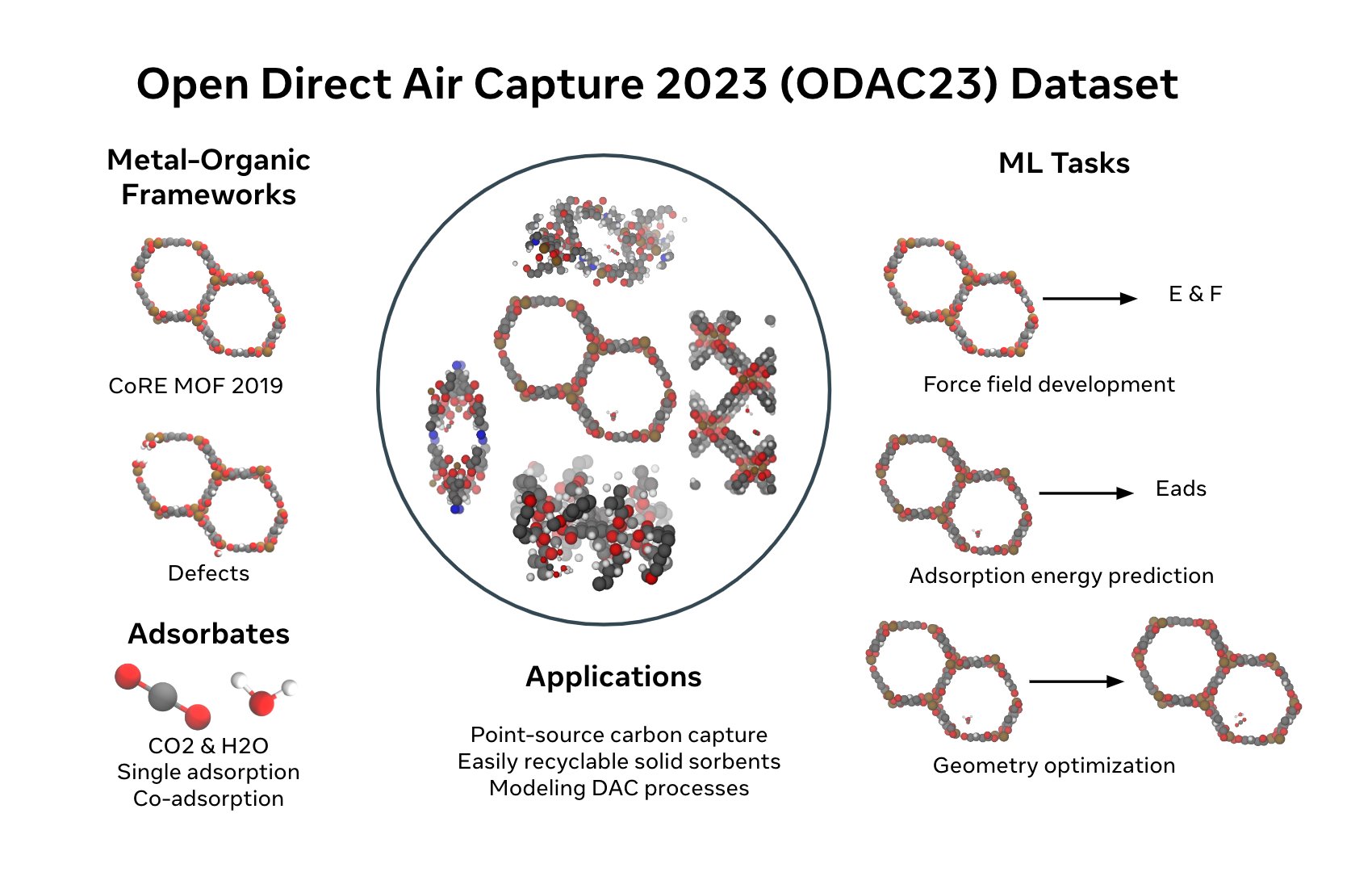

- [Nov 23] We released ODAC23, the largest dataset (to date) of DFT calculations on metal–organic frameworks (MOFs) for Direct Air Capture applications, along with pretrained models.

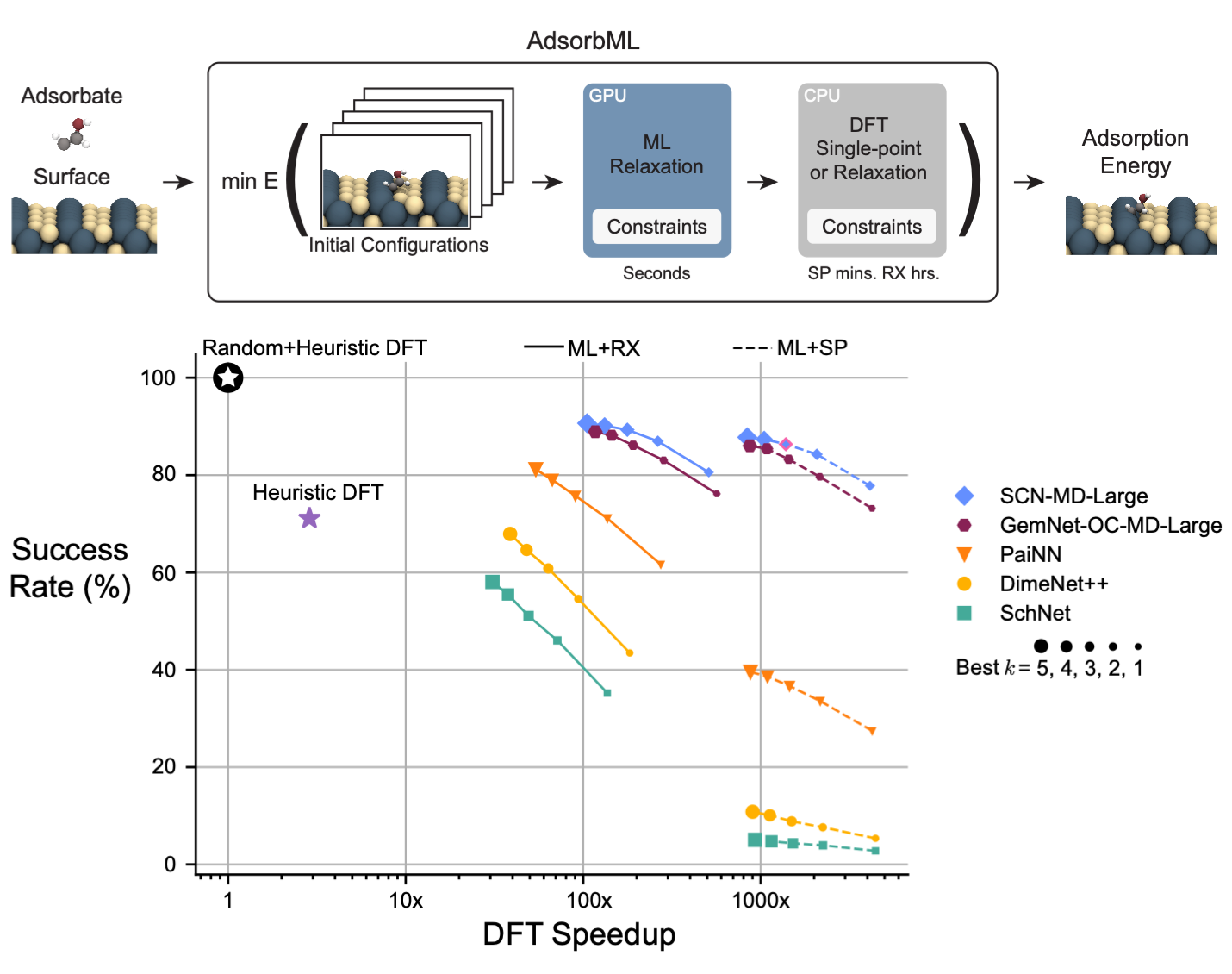

- [Oct 23] We released the AI-powered Open Catalyst demo and API, which can accelerate the search for electrocatalysts by running structural relaxations ~1000x faster than DFT (tweet).

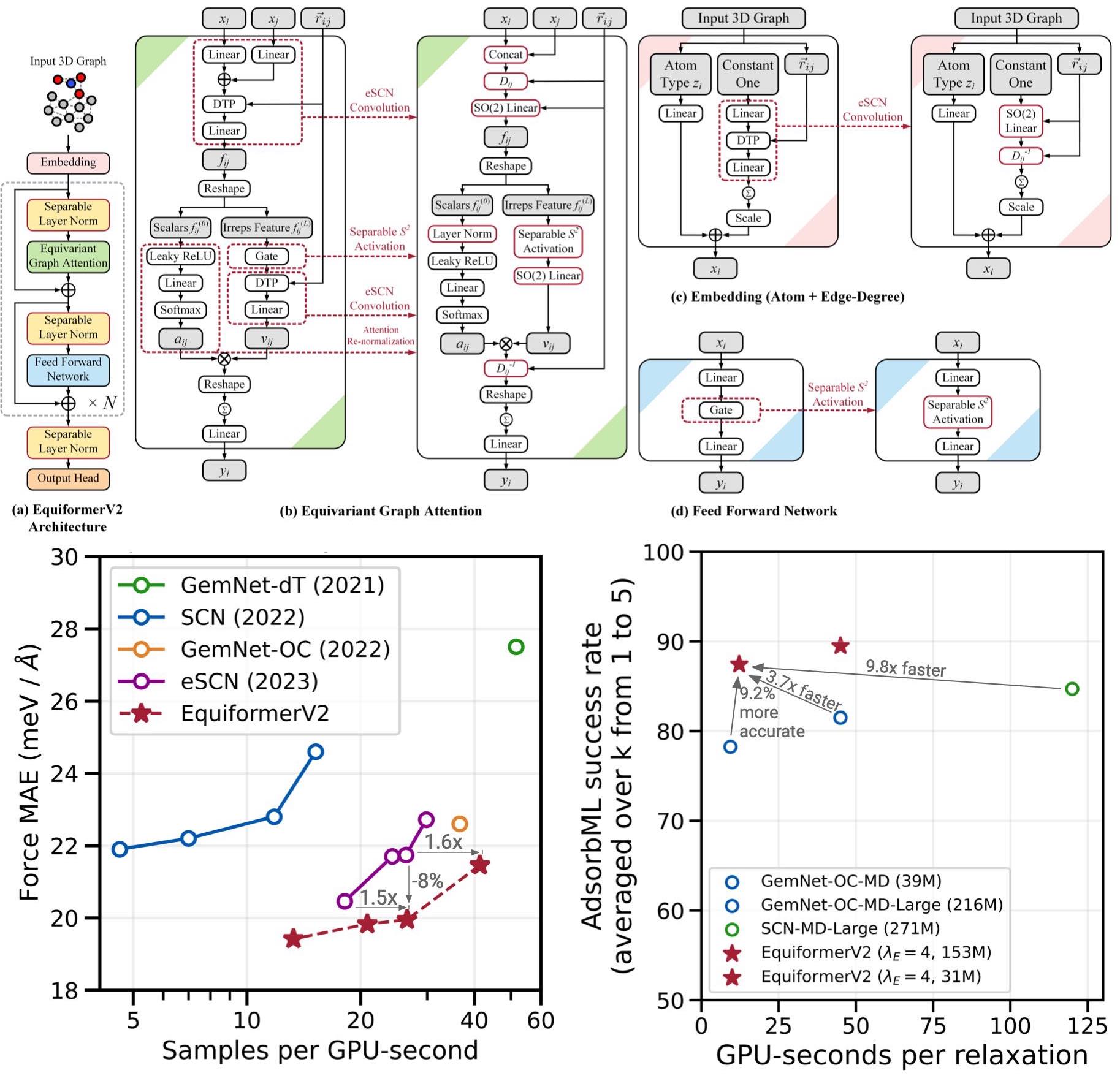

- [Jun 23] Introducing EquiformerV2, a new state-of-the-art GNN for atomistic modeling.

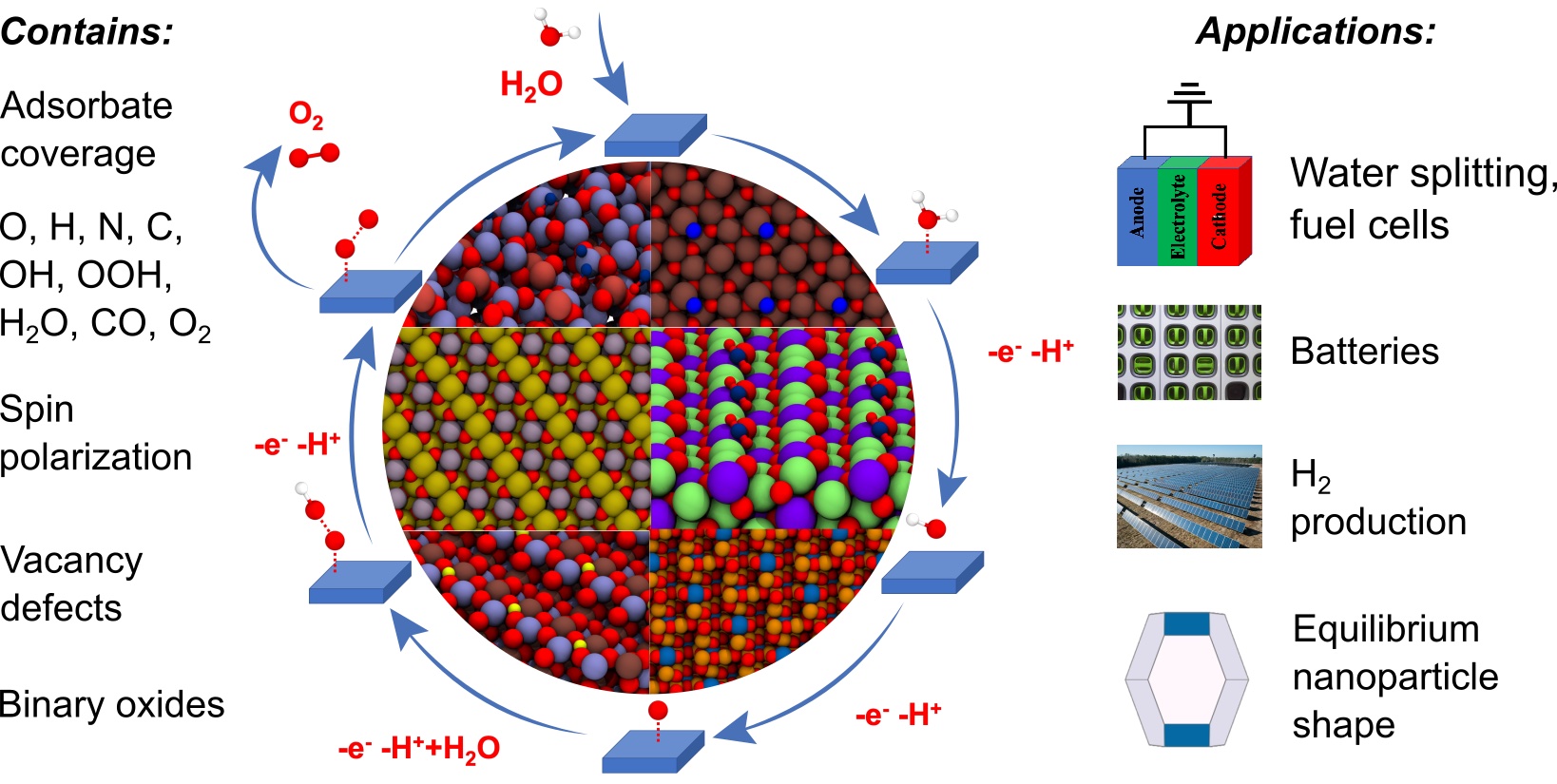

- [Jun 22] We released the OC22 dataset: ~10M DFT calculations for oxide electrocatalysis.

- [Feb 22] Runner-up for the 2020 AAAI/ACM SIGAI Doctoral Dissertation Award.

- [Mar 21] Awarded the Georgia Tech Sigma Xi Best PhD Thesis Award.

- [Mar 21] Awarded the Georgia Tech College of Computing Dissertation Award.

- [Nov 20] The Open Catalyst Project was covered by Fortune, Engadget, CNBC, and VentureBeat.

- [Nov 20] Organizing the 4th Visually-Grounded Interaction & Language Workshop at NAACL.

- [Jul 20] Presenting Probing Emergent Semantics in Predictive Agents at ICML 2020 (Video).

- [Mar 20] I completed my PhD! My thesis, “Building agents that can see, talk, and act”, is here.

- [Nov 19] Organizing the Visual Question Answering and Dialog workshop at CVPR 2020.

- [Sep 19] Organizing the Visually-Grounded Interaction & Language Workshop at NeurIPS.

- [Jun 19] Presenting Targeted Multi-Agent Communication as an oral at ICML 2019 (Video).

- [Mar 19] Co-founded Caliper. Caliper helps recruiters evaluate practical AI skills.

- [Feb 19] My work was featured in this wonderful article by Georgia Tech.

- [Jan 19] Awarded the Facebook Graduate Fellowship.

- [Jan 19] Awarded the Microsoft Research PhD Fellowship (declined).

- [Jan 19] Awarded the NVIDIA Graduate Fellowship (declined).

- [Jan 19] Organizing the 2nd Visual Dialog Challenge.

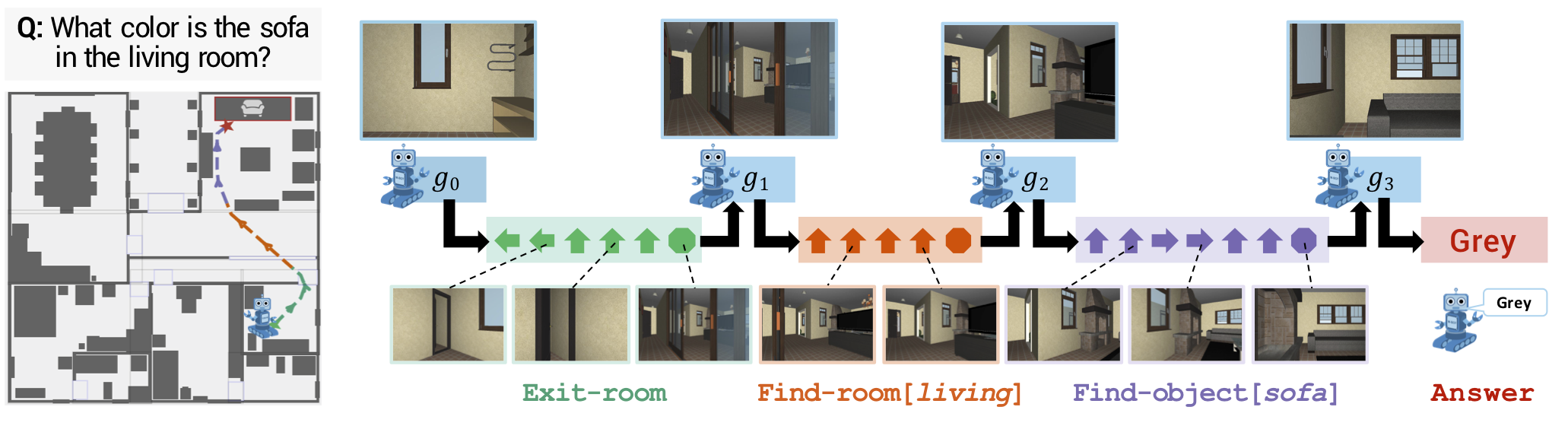

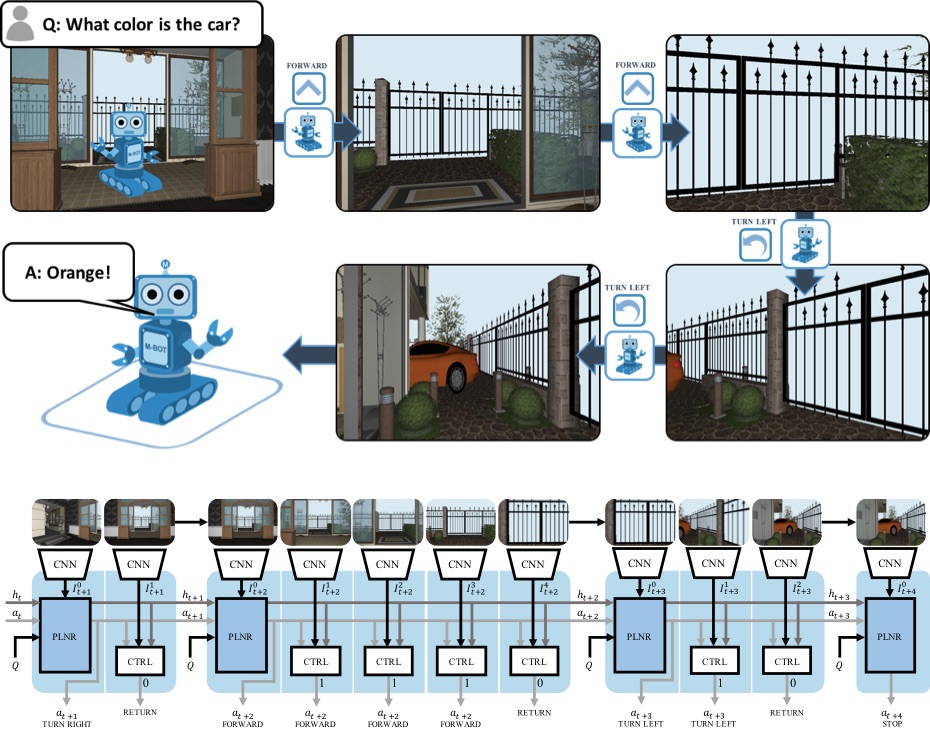

- [Oct 18] Presenting Neural Modular Control for Embodied QA at CoRL 2018 (Video).

- [Sep 18] Presenting results and analysis of the 1st Visual Dialog Challenge at ECCV 2018.

- [Jul 18] Presenting a tutorial on Connecting Language and Vision to Actions at ACL 2018.

- [Jun 18] Organizing the 1st Visual Dialog Challenge.

- [Jun 18] Presenting Embodied Question Answering as an oral at CVPR 2018 (Video).

- [Jun 18] Organizing the VQA Challenge and Visual Dialog Workshop at CVPR 2018.

- [Mar 18] Speaking on Embodied Question Answering at NVIDIA GTC (Video).

- [Dec 17] Awarded the Adobe Research Fellowship. (Department’s news story)

- [Dec 17] Awarded the Snap Inc. Research Fellowship. (Department’s news story)

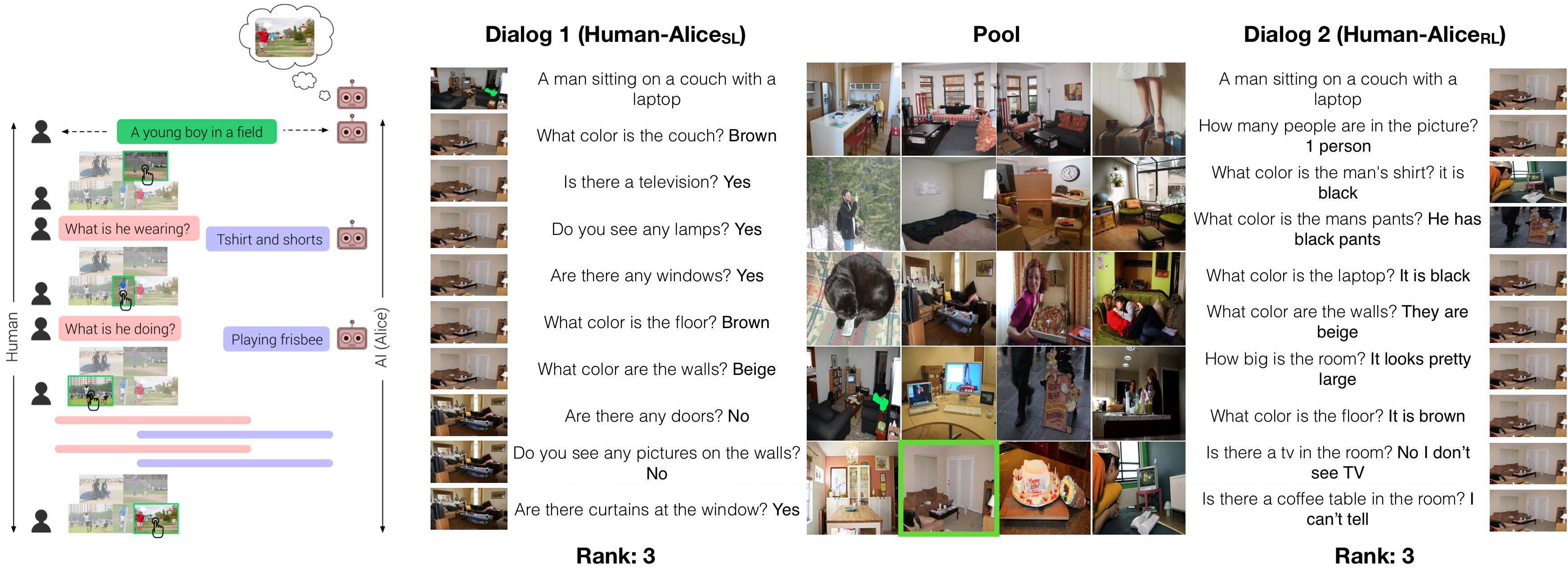

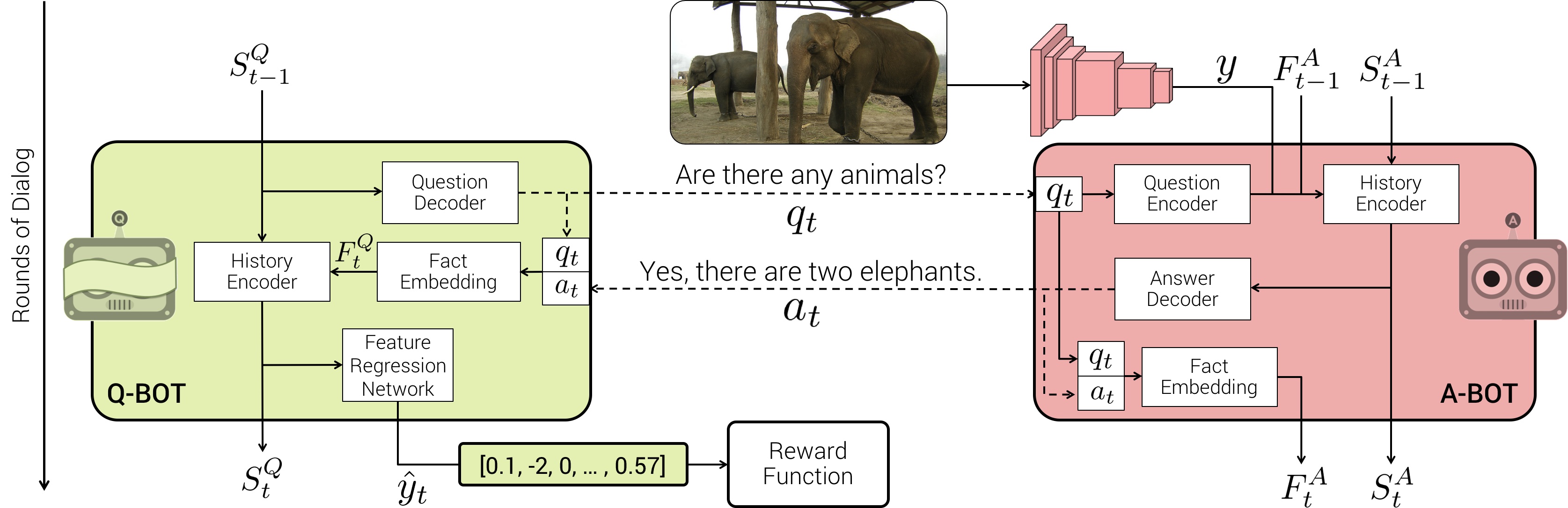

- [Oct 17] Presenting Cooperative Visual Dialog Agents as an oral at ICCV 2017 (Video).

- [Jul 17] Presenting Visual Dialog at the VQA Challenge Workshop, CVPR 2017 (Video).

- [Jul 17] Presenting our paper on Visual Dialog as a spotlight at CVPR 2017 (Video).

Bio

I am the Co-founder and Co-CEO of Yutori, where we’re building state-of-the-art web agents – AI agents that can reliably take actions and execute tasks on the web.

Previously, I was a Research Scientist at Fundamental AI Research (FAIR) at Meta working on deep neural networks and their applications in climate change, specifically focusing on electrocatalyst discovery for renewable energy storage as part of the Open Catalyst Project.

Before that, I was a Computer Science PhD student at Georgia Tech, advised by Dhruv Batra, and working closely with Devi Parikh, where I focused on developing artificial agents that can see (computer vision), talk (language modeling), and act (reinforcement learning).

During my PhD, I interned thrice at Facebook AI Research — Summer 2017 and Spring 2018 at Menlo Park, working with Georgia Gkioxari, Devi Parikh and Dhruv Batra on training embodied agents for navigation and question-answering in simulated environments (see embodiedqa.org), and Summer 2018 at Montréal, working with Mike Rabbat and Joelle Pineau on communication protocols in multi-agent reinforcement learning. In 2019, I interned at DeepMind in London working on grounded language learning with Felix Hill, Laura Rimell, and Stephen Clark, and at Tesla Autopilot in Palo Alto working on differentiable neural architecture search with Andrej Karpathy.

My PhD research was supported by fellowships from Facebook, Adobe, and Snap.

Prior to joining grad school, I worked on neural coding in zebrafish tectum as an intern under Prof. Geoffrey Goodhill and Lilach Avitan at the Goodhill Lab, Queensland Brain Institute.

I got my Bachelor’s at IIT Roorkee in 2015. During my undergrad, I took part in Google Summer of Code (2013 and 2014), won several competitions (Yahoo! HackU!, Microsoft Code.Fun.Do., Deloitte CCTC 2013 and 2014), and owe most of my programming/tinkering bent to SDSLabs.

On the side, I built aideadlin.es (countdowns to a bunch of CV/NLP/ML/AI conference deadlines) and aipaygrad.es (statistics of industry job offers in AI), neural-vqa and its extension neural-vqa-attention, HackFlowy, graf, Erdős, etc. I also occasionally dabble in generative art. I like this map tracking the places I’ve been to. Blog posts from a previous life.

Publications

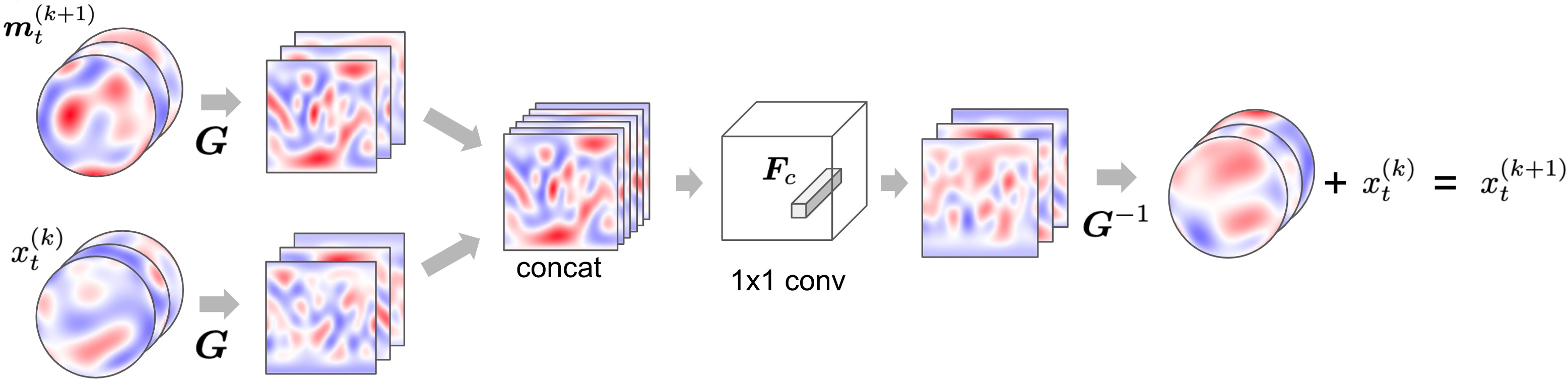

Generalizing Denoising to Non-Equilibrium Structures Improves Equivariant Force Fields

The Open DAC 2023 Dataset and Challenges for Sorbent Discovery in Direct Air Capture

EquiformerV2: Improved Equivariant Transformer for Scaling to Higher-Degree Representations

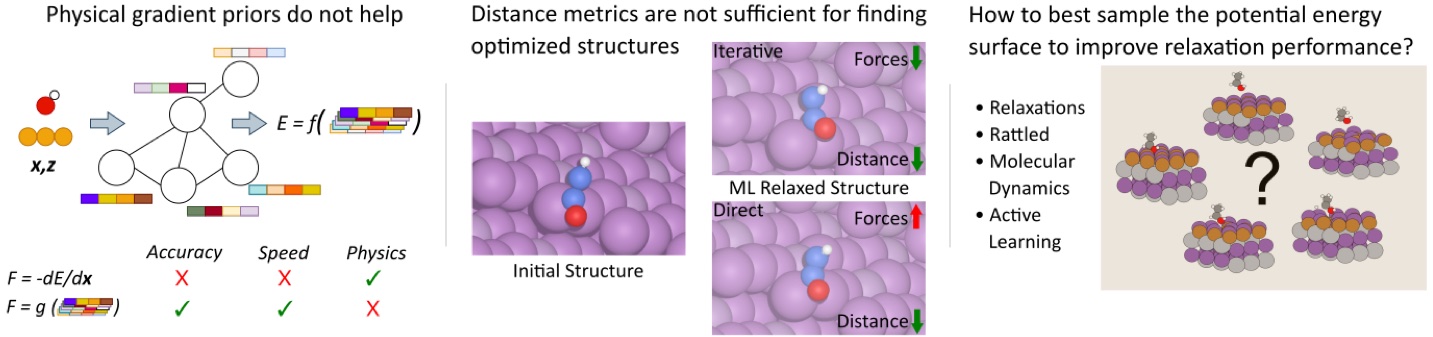

AdsorbML: Accelerating Adsorption Energy Calculations with Machine Learning

npj Computational Materials

Paper

Code

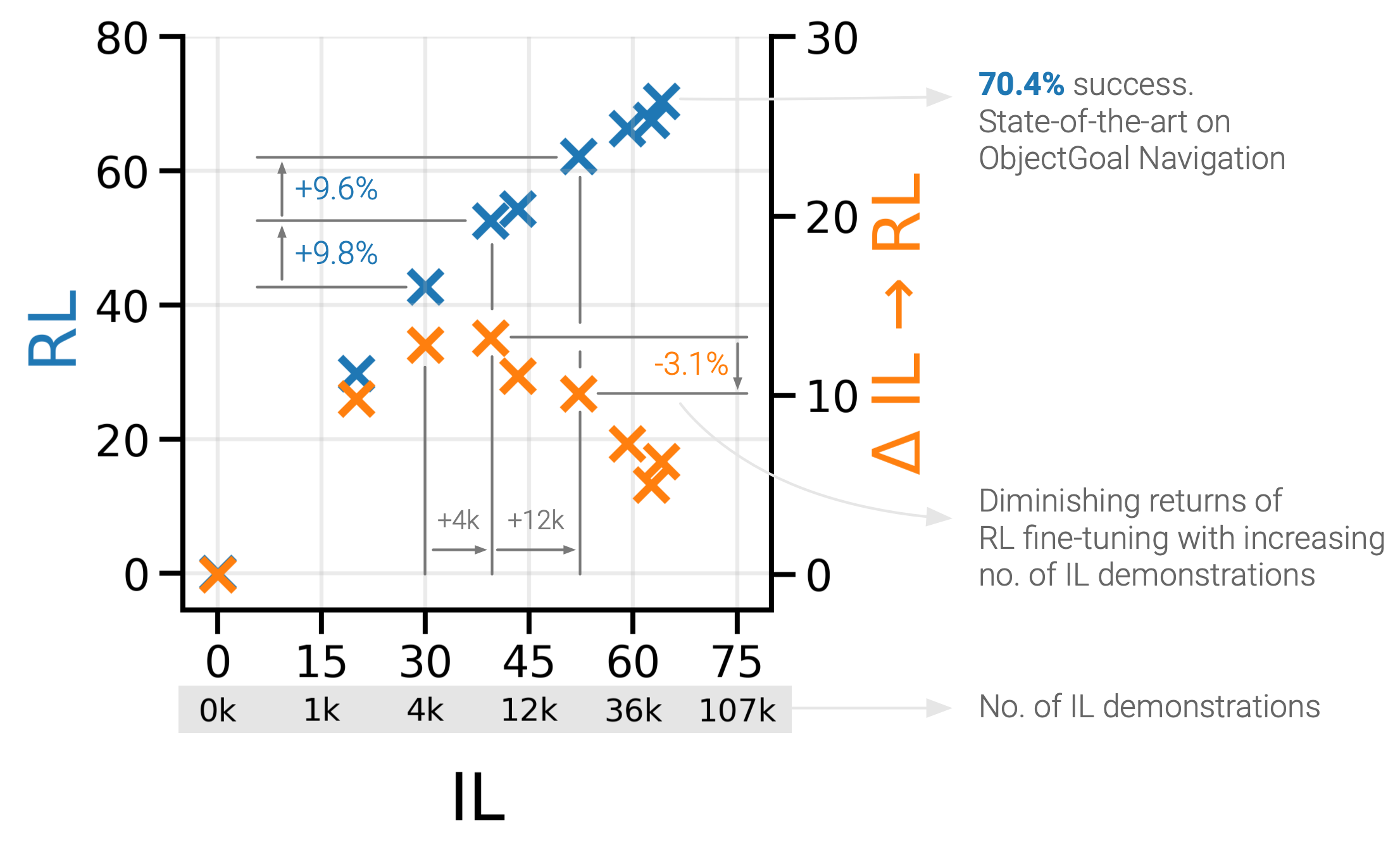

PIRLNav: Pretraining with Imitation and RL Finetuning for ObjectNav

CVPR 2023, RRL workshop at ICLR 2023

Paper

Code

Website

The Open Catalyst 2022 (OC22) Dataset and Challenges for Oxide Electrocatalysis

ACS Catalysis 2022

Paper

Code

Dataset

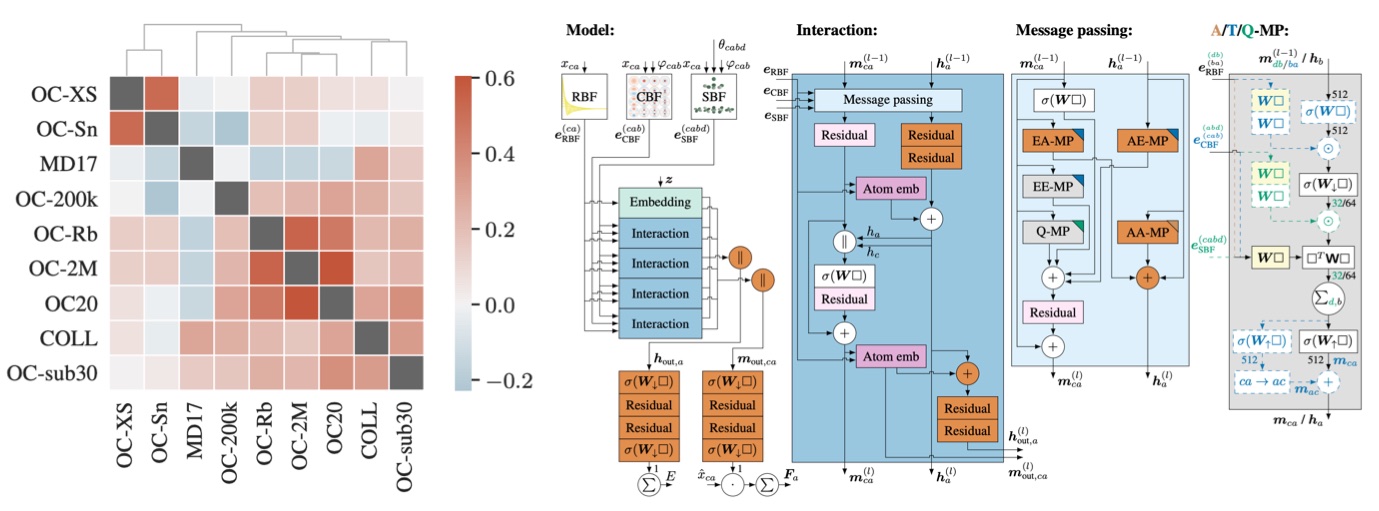

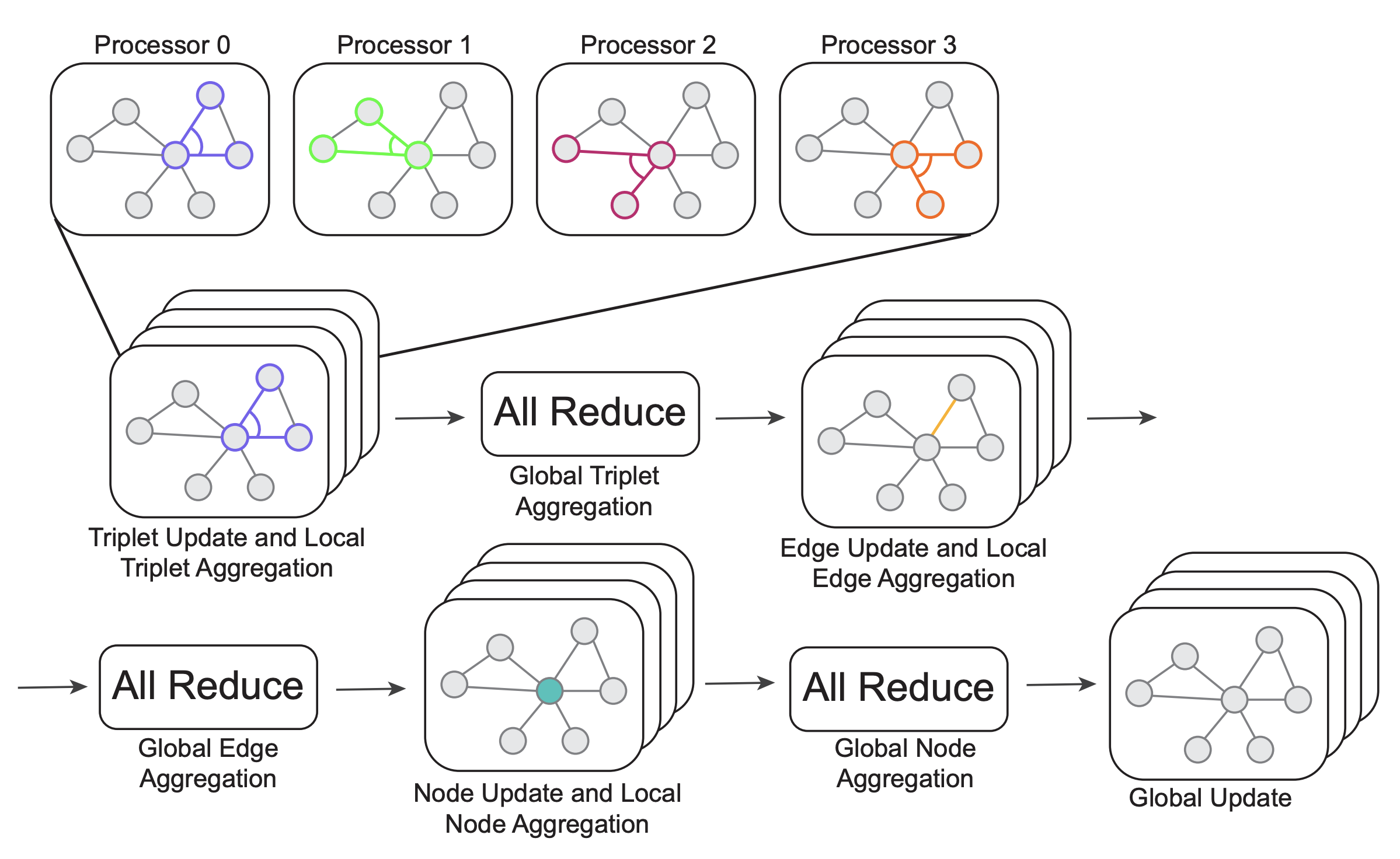

GemNet-OC: Developing Graph Neural Networks for Large and Diverse Molecular Simulation Datasets

Spherical Channels for Modeling Atomic Interactions

Open Challenges in Developing Generalizable Large Scale Machine Learning Models for Catalyst Discovery

ACS Catalysis (Perspective) 2022

Paper

Transfer learning using attentions across atomic systems with graph neural networks (TAAG)

The Journal of Chemical Physics 2022

Paper

Code

Habitat-Web: Learning Embodied Object-Search Strategies from Human Demonstrations at Scale

CVPR 2022

Paper

Code

Website

Presentation video

Towards Training Billion Parameter Graph Neural Networks for Atomic Simulations

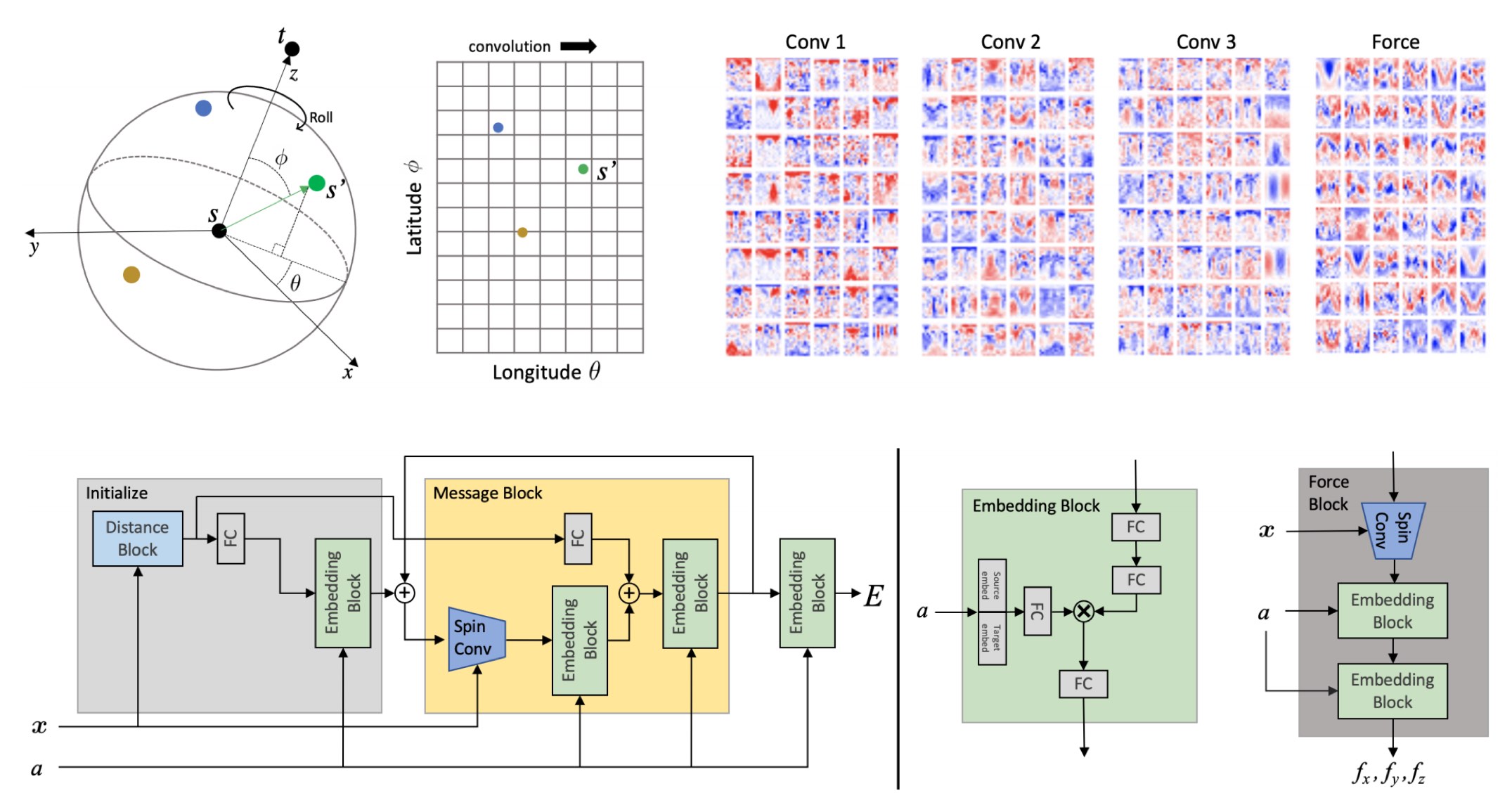

Rotation Invariant Graph Neural Networks using Spin Convolutions

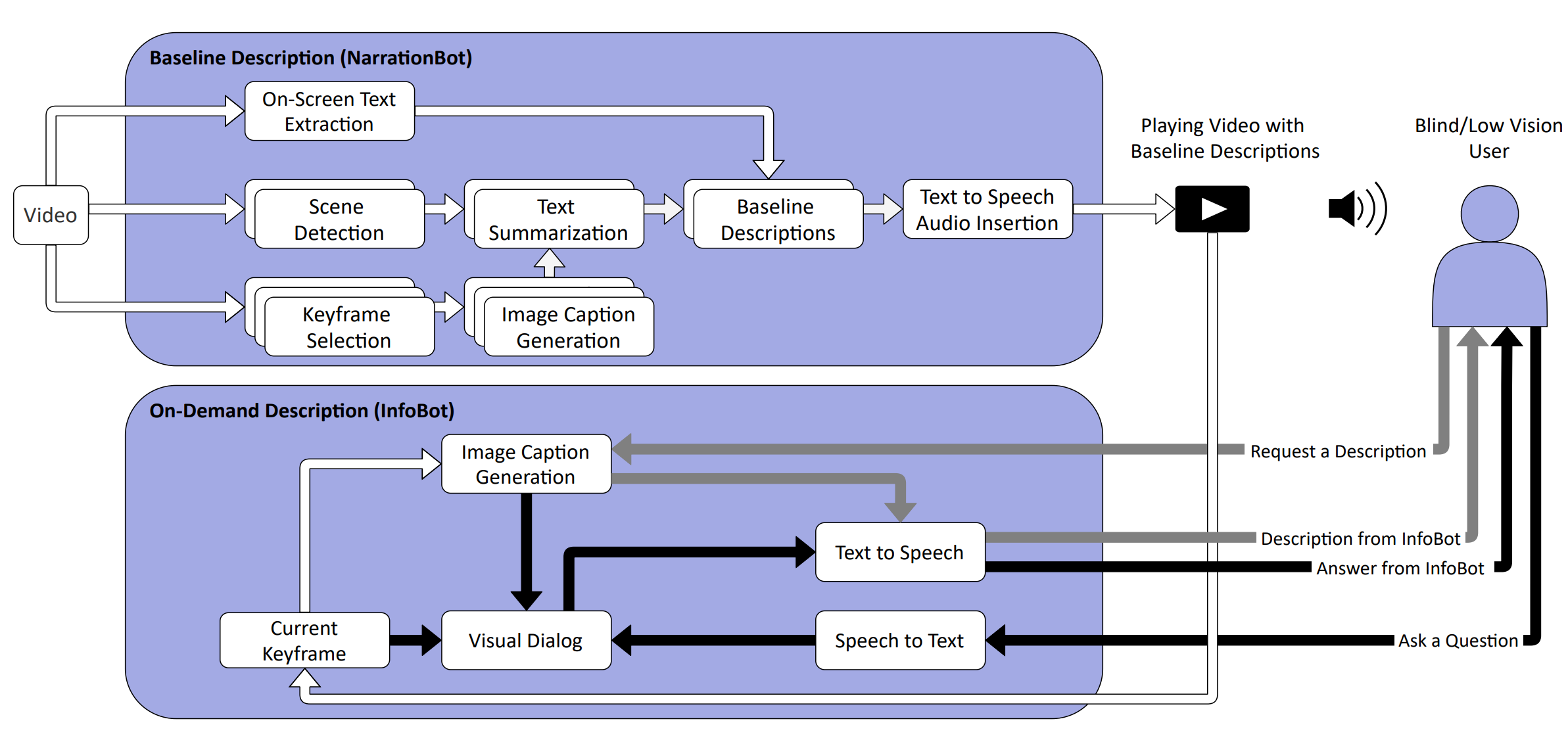

Automated Video Description for Blind and Low Vision Users

CHI EA 2021

Paper

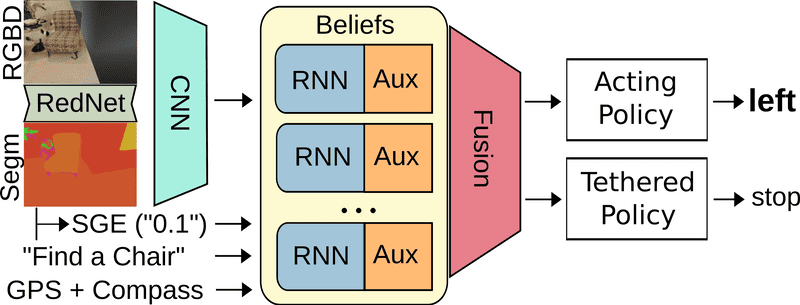

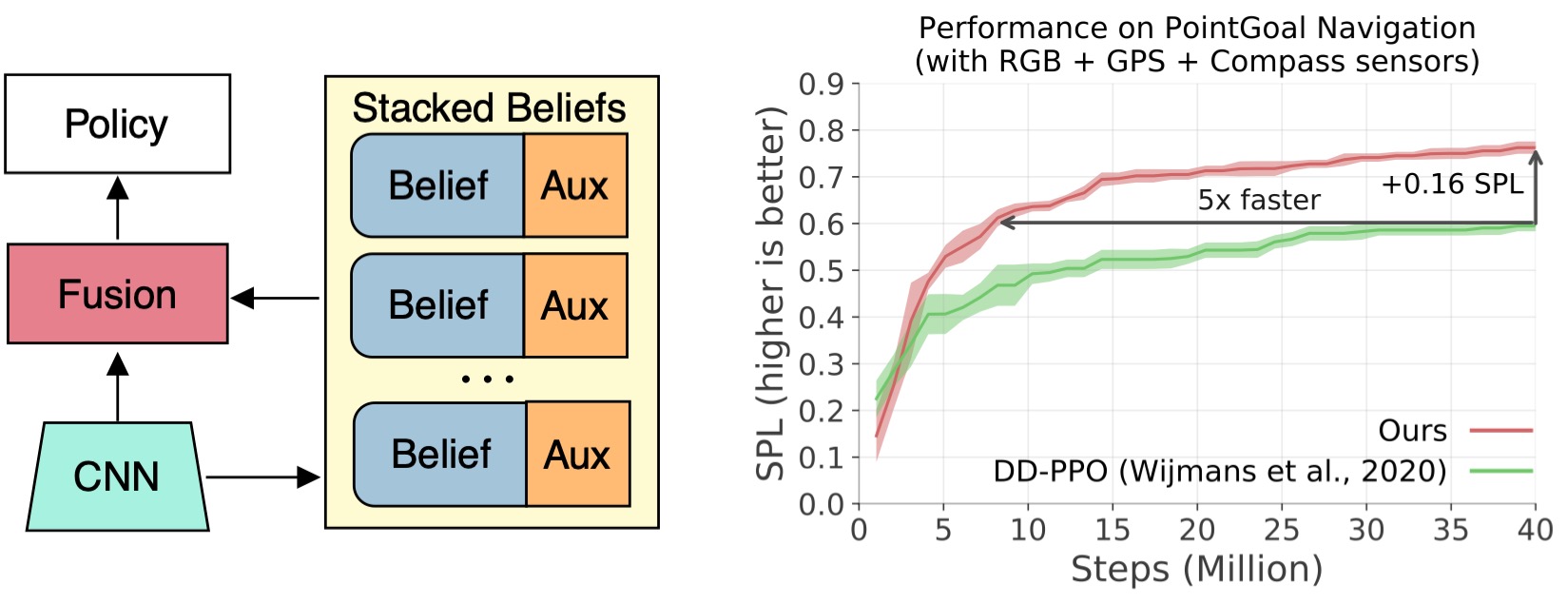

Auxiliary Tasks and Exploration Enable ObjectNav

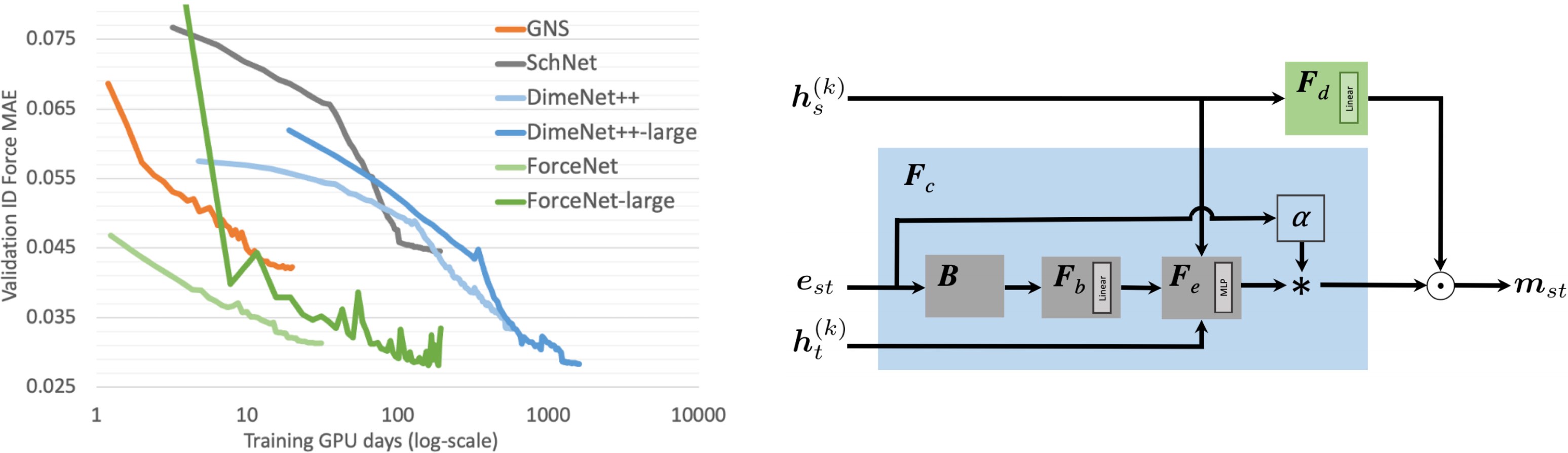

ForceNet: A Graph Neural Network for Large-Scale Quantum Calculations

ICLR 2021 Deep Learning for Simulation Workshop

Paper

opencatalystproject.org

Presentation video

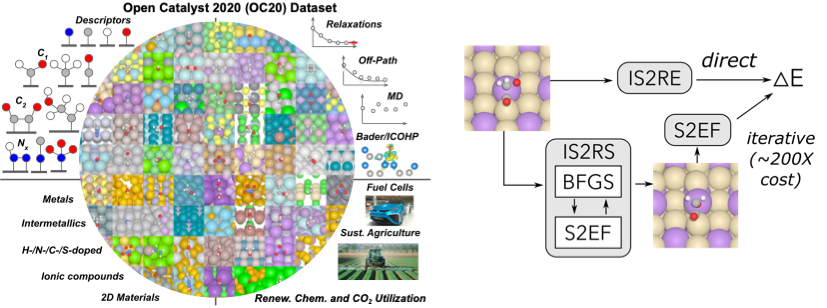

The Open Catalyst 2020 (OC20) Dataset and Community Challenges

ACS Catalysis 2021

Paper

Code

Dataset

opencatalystproject.org

An Introduction to Electrocatalyst Design using Machine Learning for Renewable Energy Storage

Auxiliary Tasks Speed Up Learning PointGoal Navigation

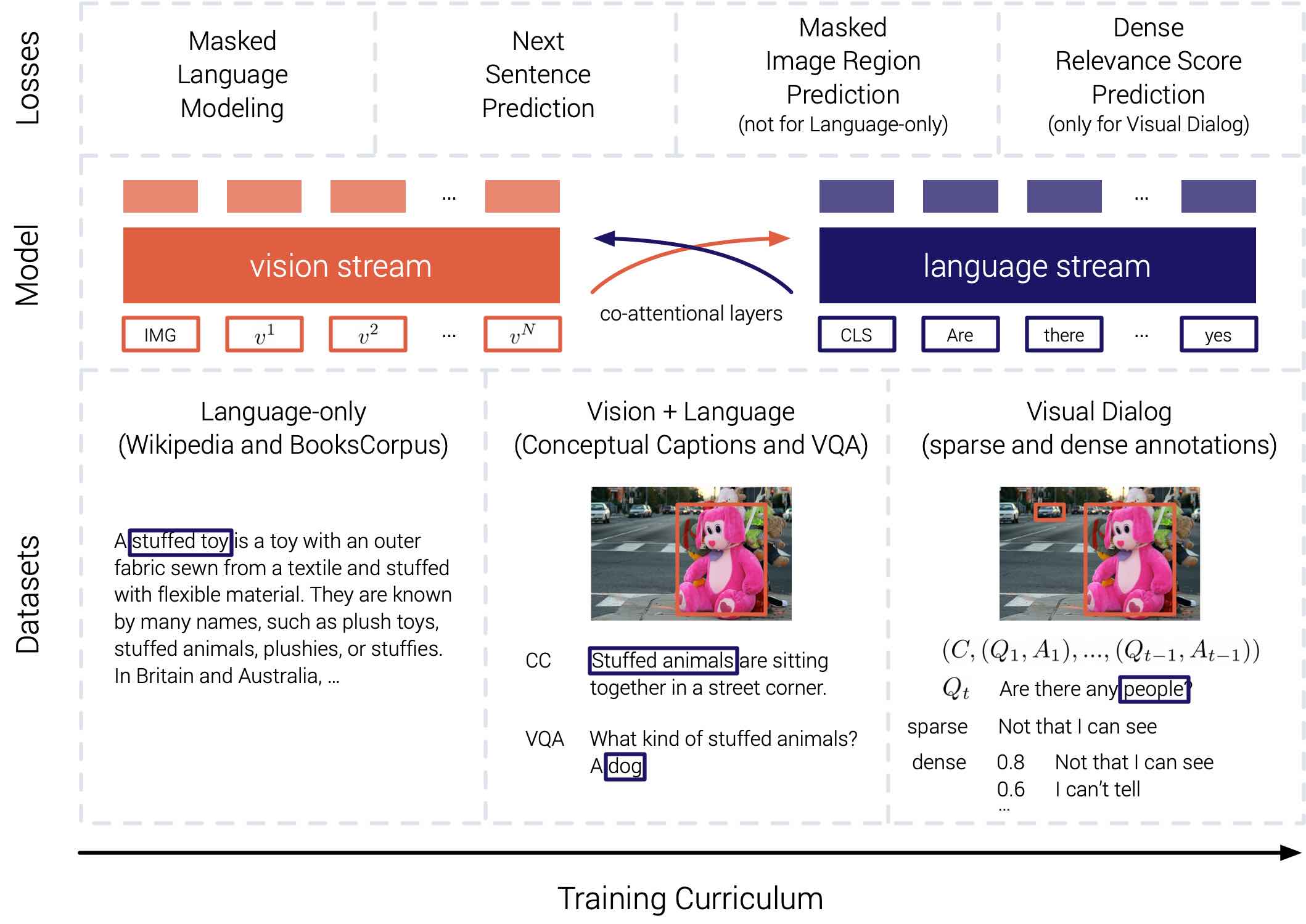

Large-scale Pretraining for Visual Dialog: A Simple State-of-the-Art Baseline

Building agents that can see, talk, and act

PhD Thesis

AAAI/ACM SIGAI Doctoral Dissertation Award, Runner-up

Georgia Tech Sigma Xi Best PhD Thesis Award

Georgia Tech College of Computing Dissertation Award

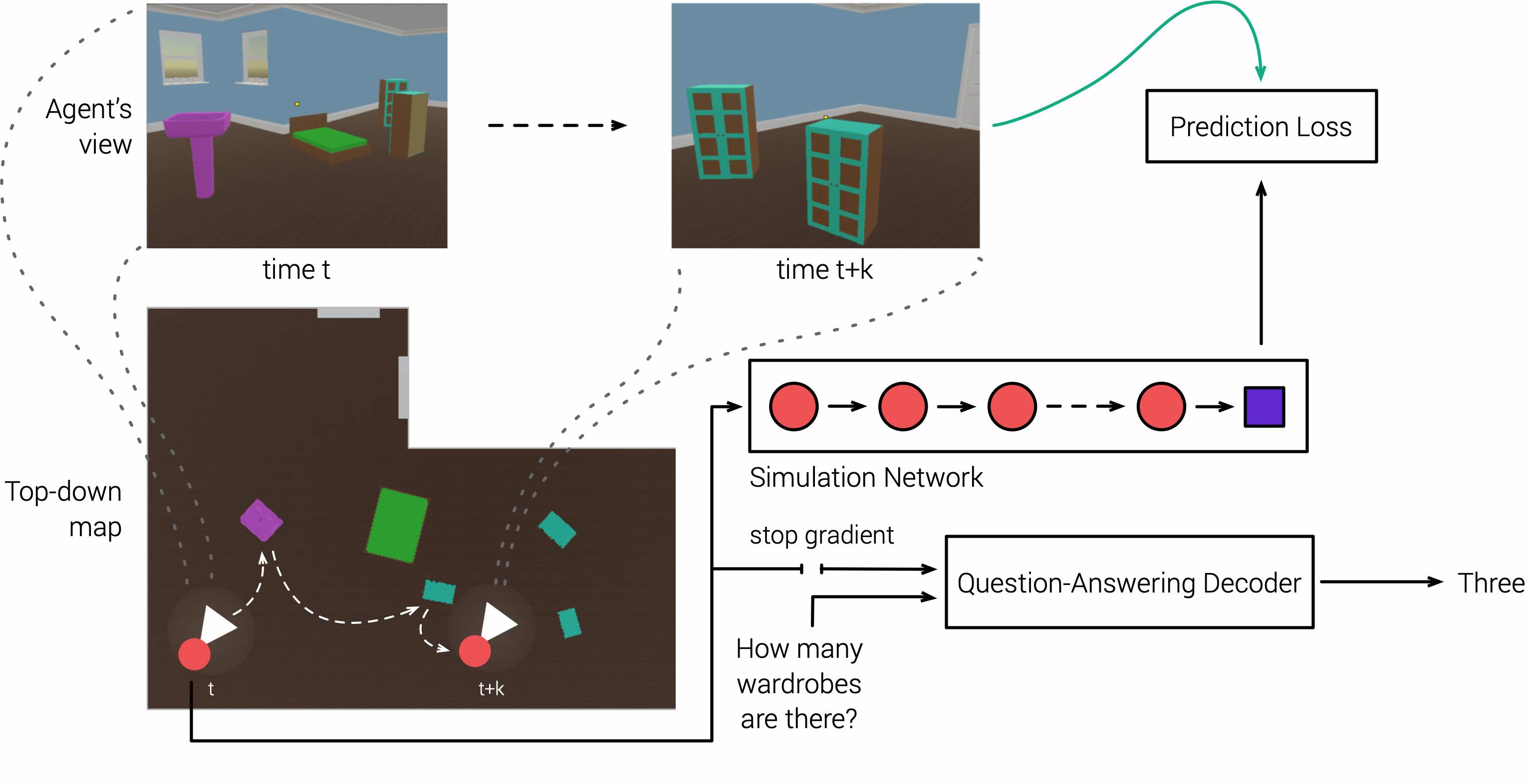

Probing Emergent Semantics in Predictive Agents via Question Answering

ICML 2020

Paper

Presentation video

Slides

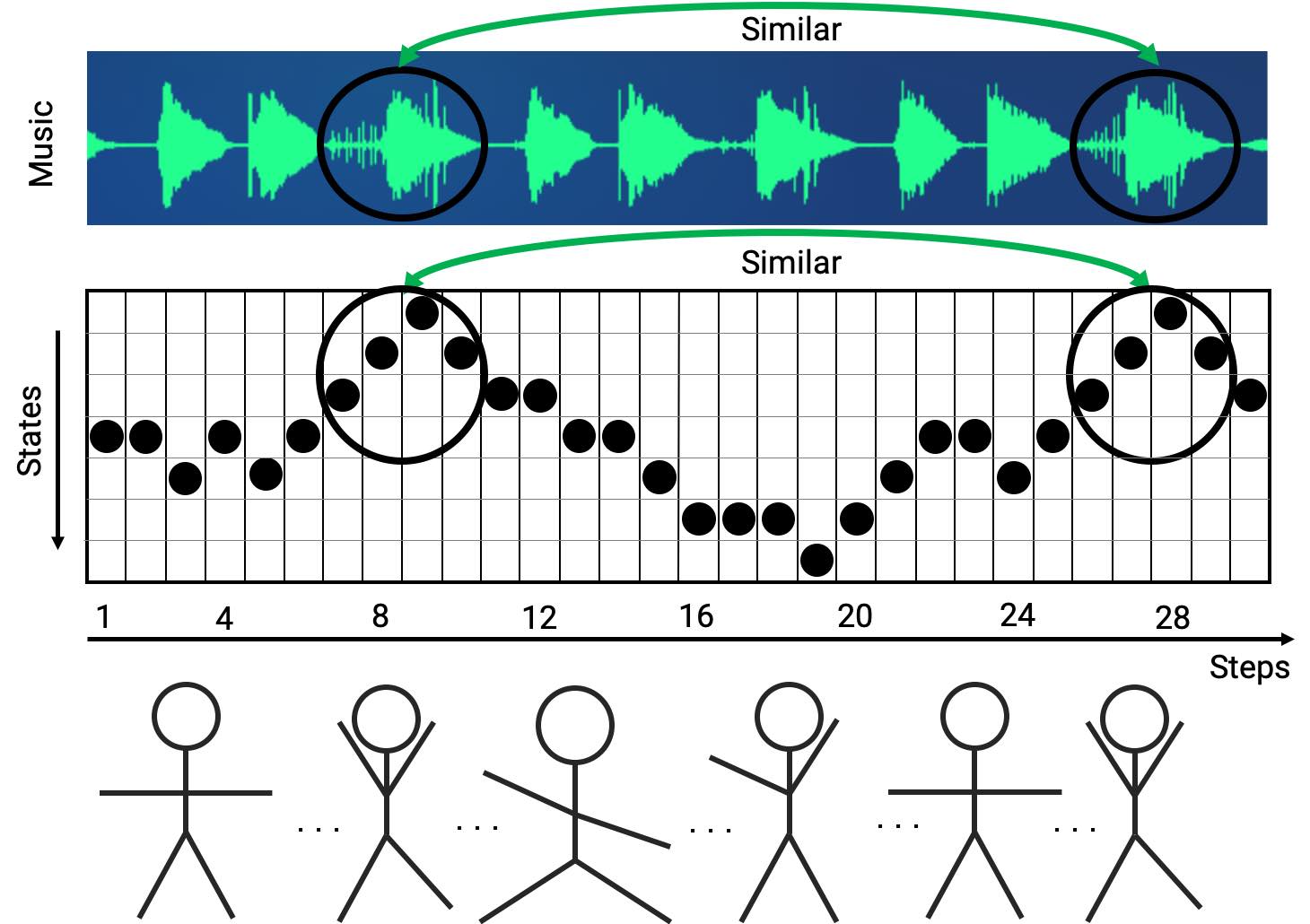

Feel The Music: Automatically Generating A Dance For An Input Song

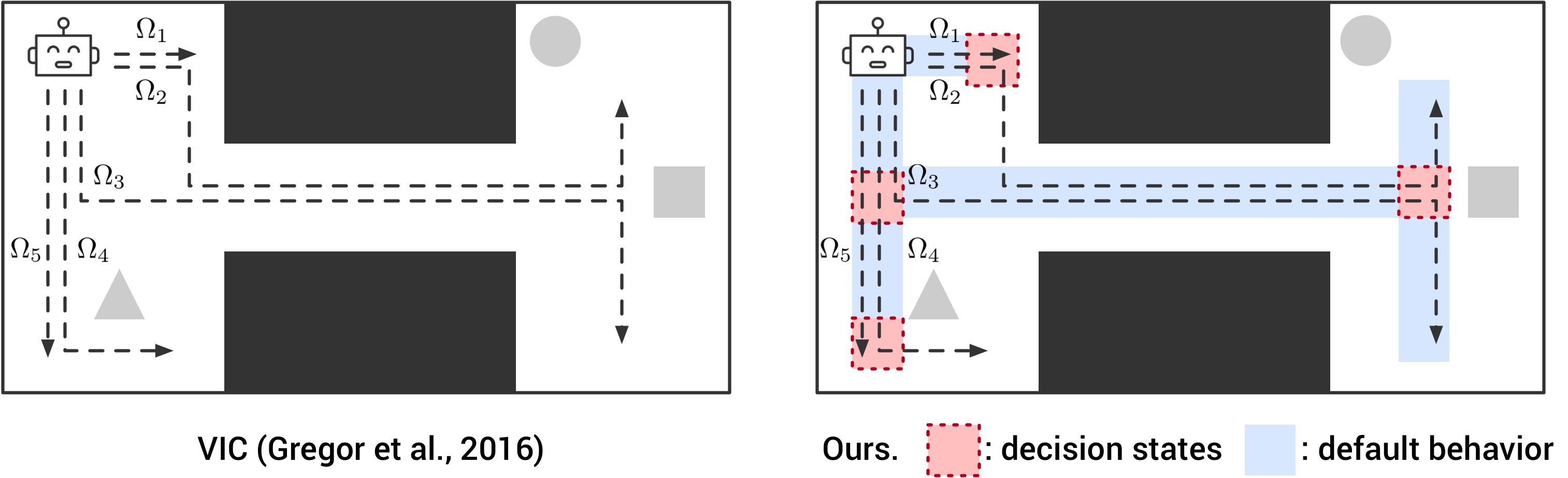

IR-VIC: Unsupervised Discovery of Sub-goals for Transfer in RL

IJCAI-PRICAI 2020, ICLR 2019 Task-Agnostic RL Workshop

Paper

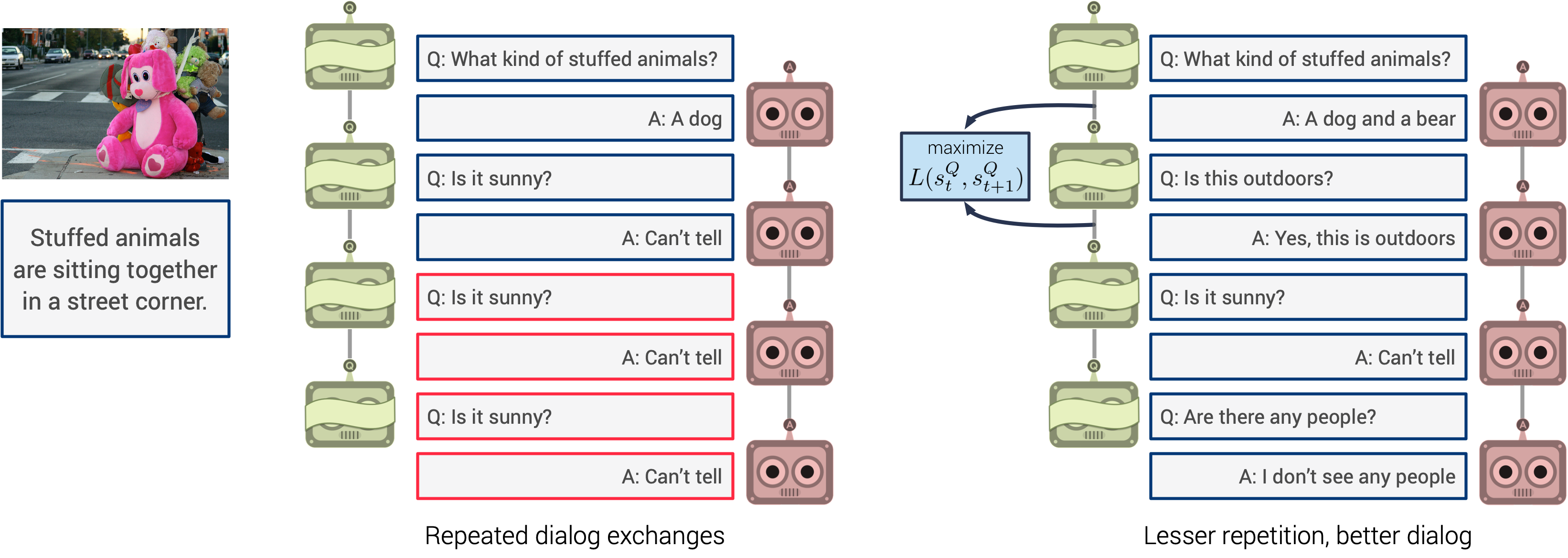

Improving Generative Visual Dialog by Answering Diverse Questions

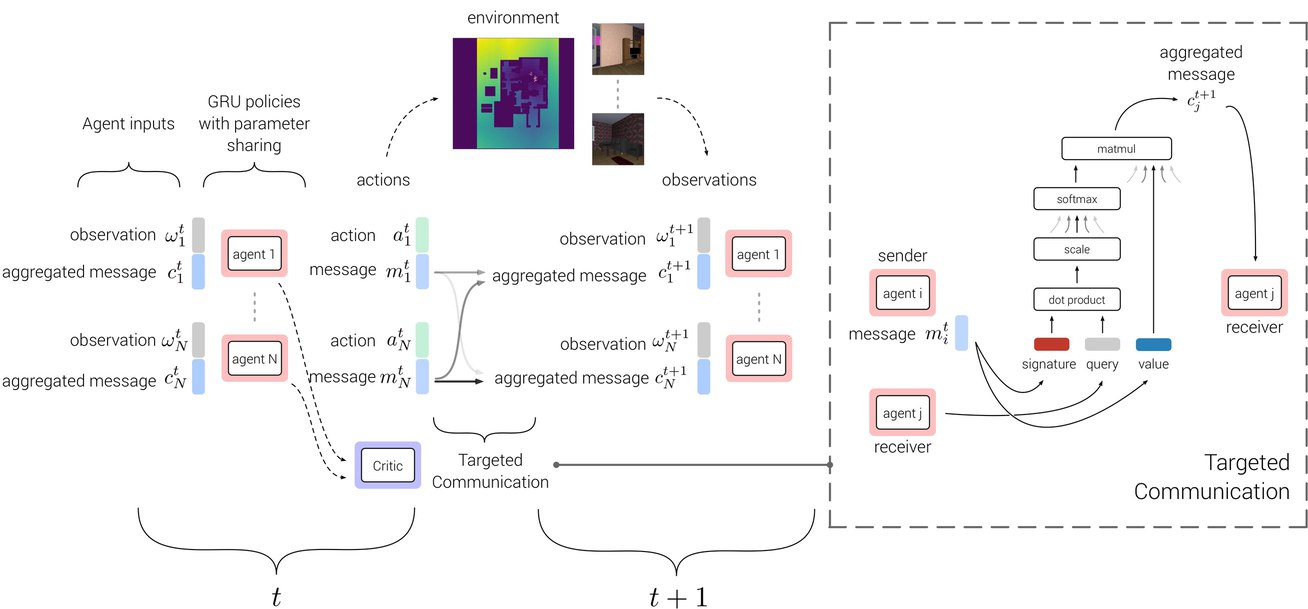

TarMAC: Targeted Multi-Agent Communication

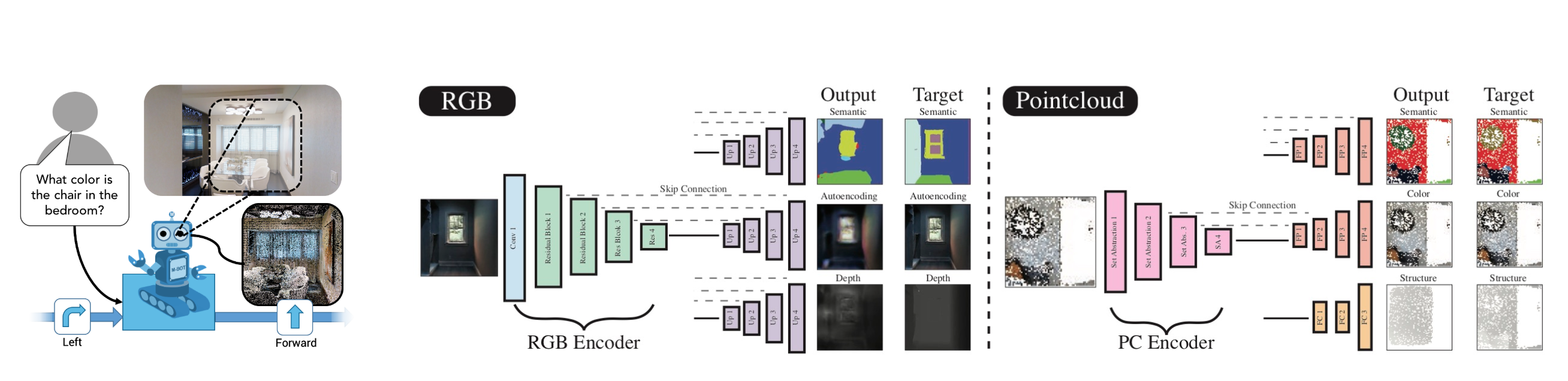

Embodied Question Answering in Photorealistic Environments with Point Clouds

CVPR 2019 (Oral)

Paper

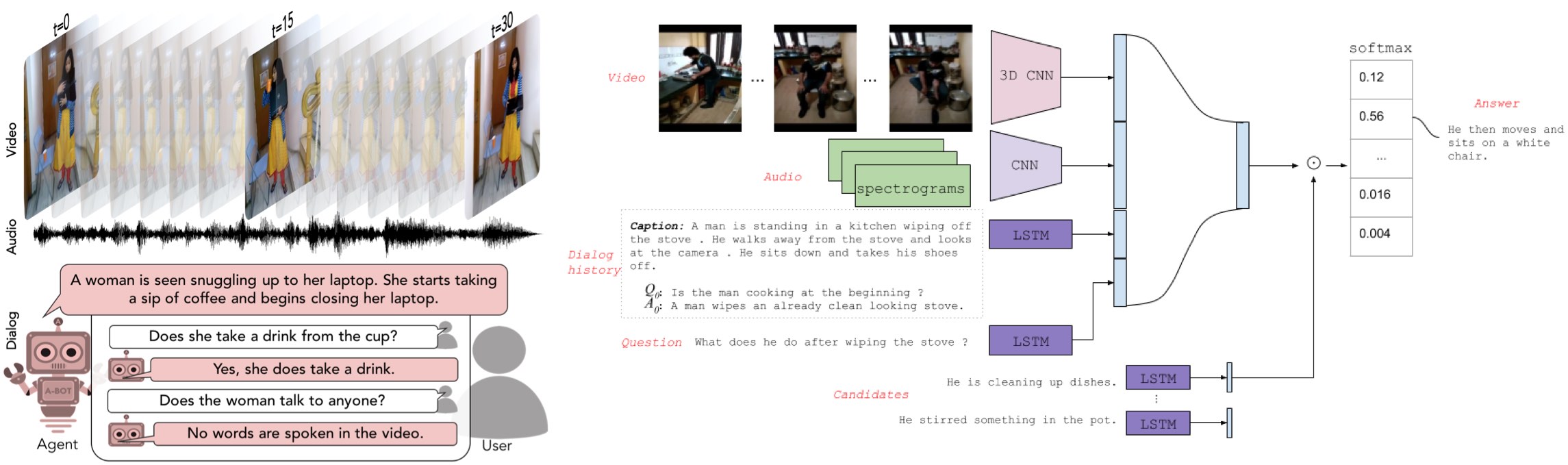

Audio-Visual Scene-Aware Dialog

CVPR 2019

Paper

Code

video-dialog.com

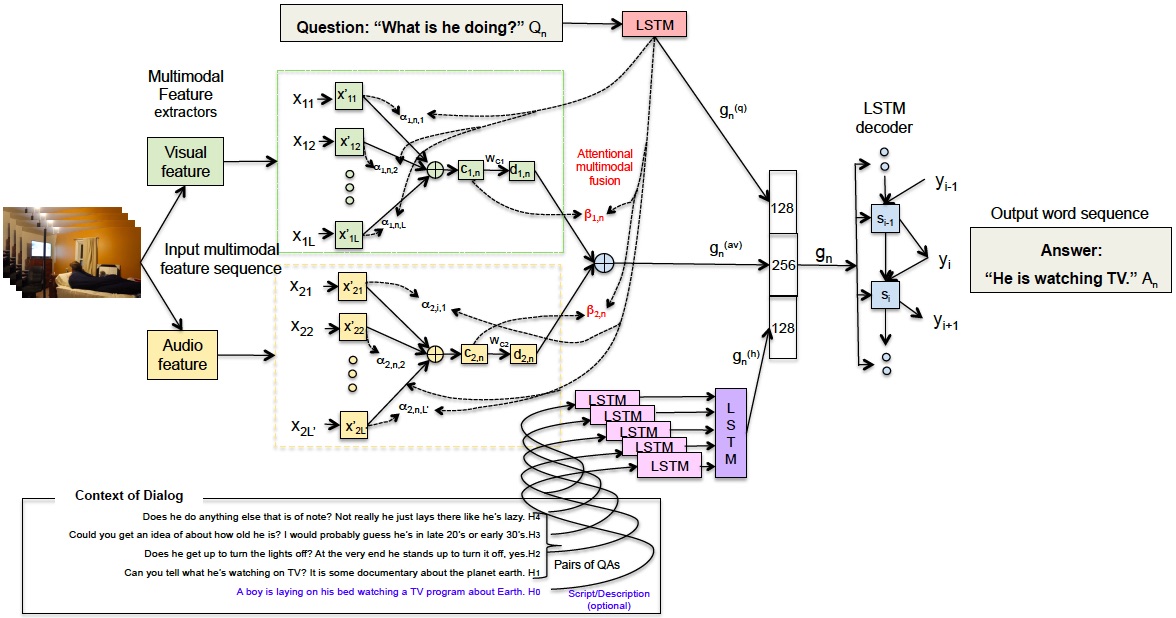

End-to-end Audio Visual Scene-Aware Dialog Using Multimodal Attention-based Video Features

ICASSP 2019

Paper

video-dialog.com

Neural Modular Control for Embodied Question Answering

CoRL 2018 (Spotlight)

Paper

embodiedqa.org

Presentation video

Slides

Embodied Question Answering

CVPR 2018 (Oral)

Paper

embodiedqa.org

Code

Presentation video

Slides

Evaluating Visual Conversational Agents via Cooperative Human-AI Games

Learning Cooperative Visual Dialog Agents with Deep Reinforcement Learning

ICCV 2017 (Oral)

Paper

Code

Presentation video

Slides

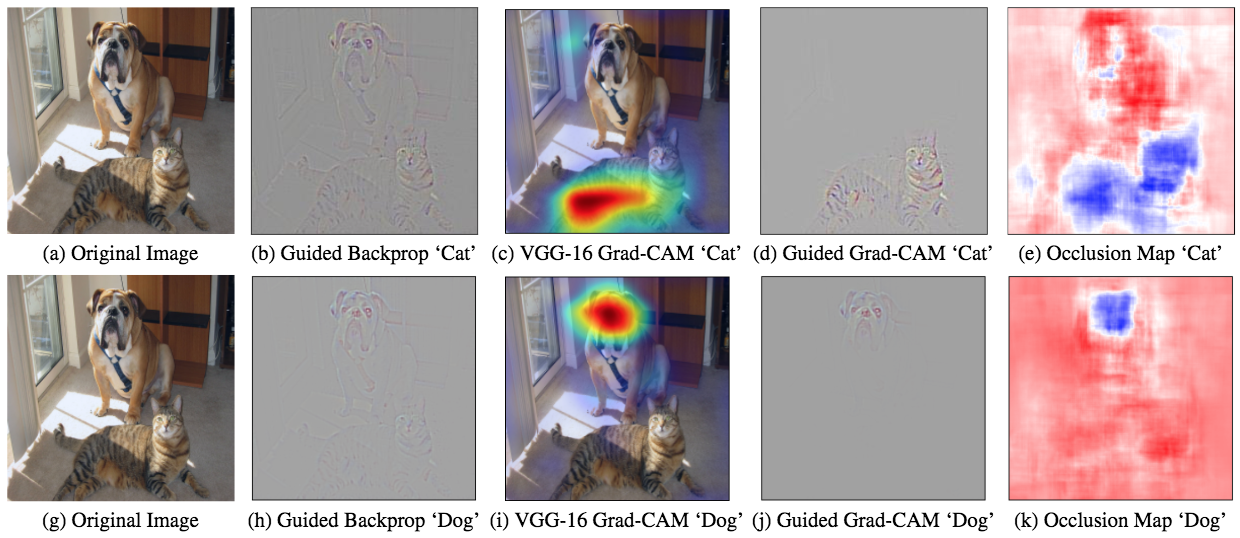

Grad-CAM: Visual Explanations from Deep Networks via Gradient-based Localization

IJCV 2019, ICCV 2017, NIPS 2016 Interpretable ML for Complex Systems Workshop

Paper

Code

Demo

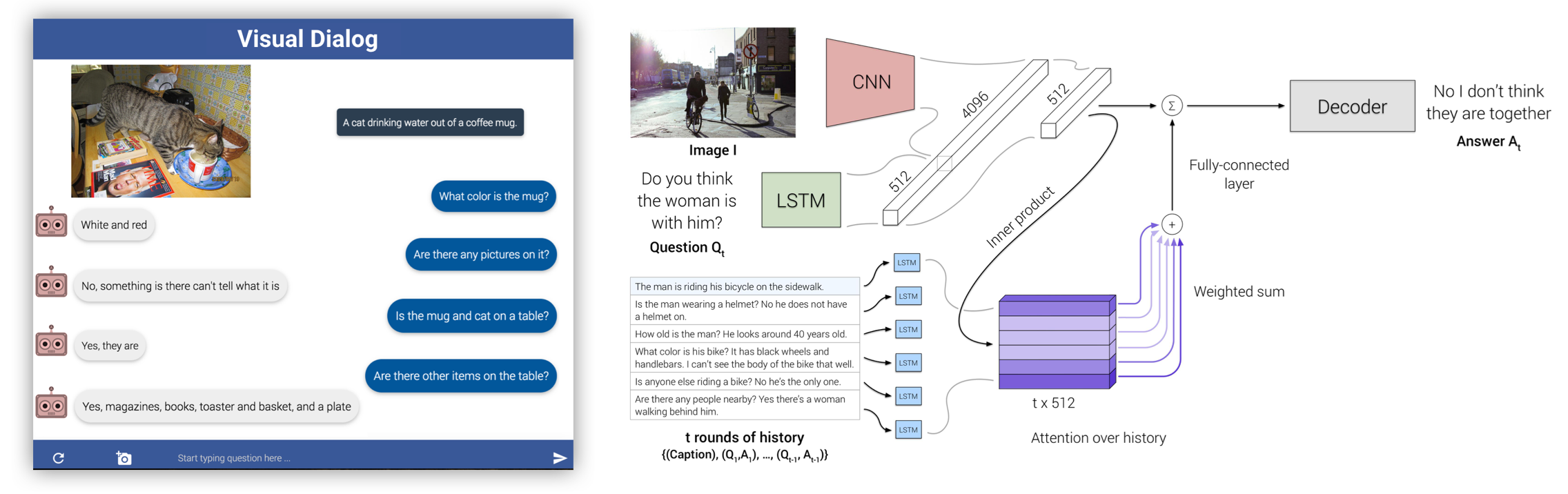

Visual Dialog

PAMI 2018, CVPR 2017 (Spotlight)

Paper

Code

visualdialog.org

AMT chat interface

Demo

Presentation video

Slides

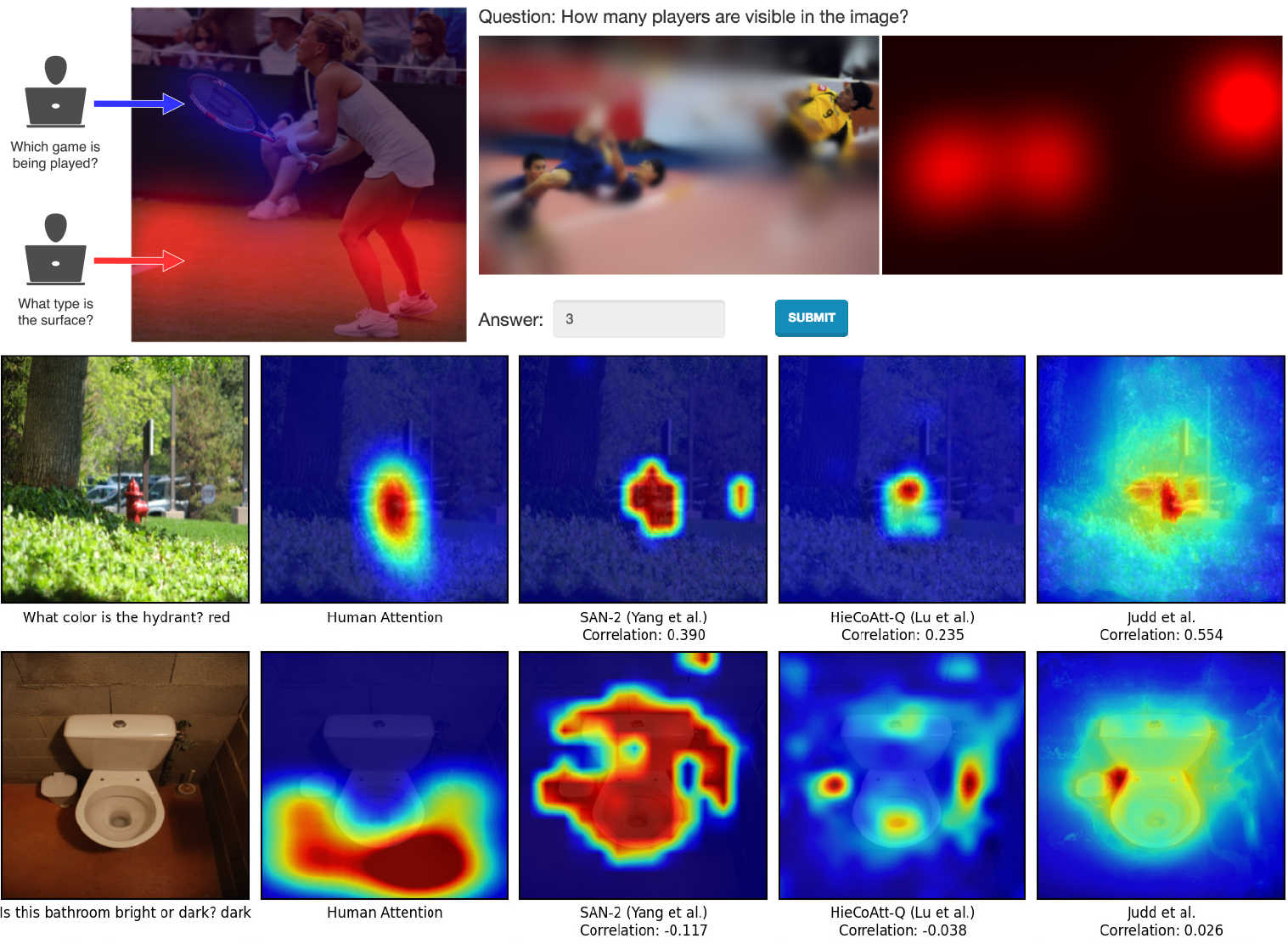

Human Attention in Visual Question Answering: Do Humans and Deep Networks Look at the Same Regions?

CVIU 2017, EMNLP 2016, ICML 2016 Workshop on Visualization for Deep Learning

Paper

Project+Dataset

neural-vqa-attention

Talks

Side projects

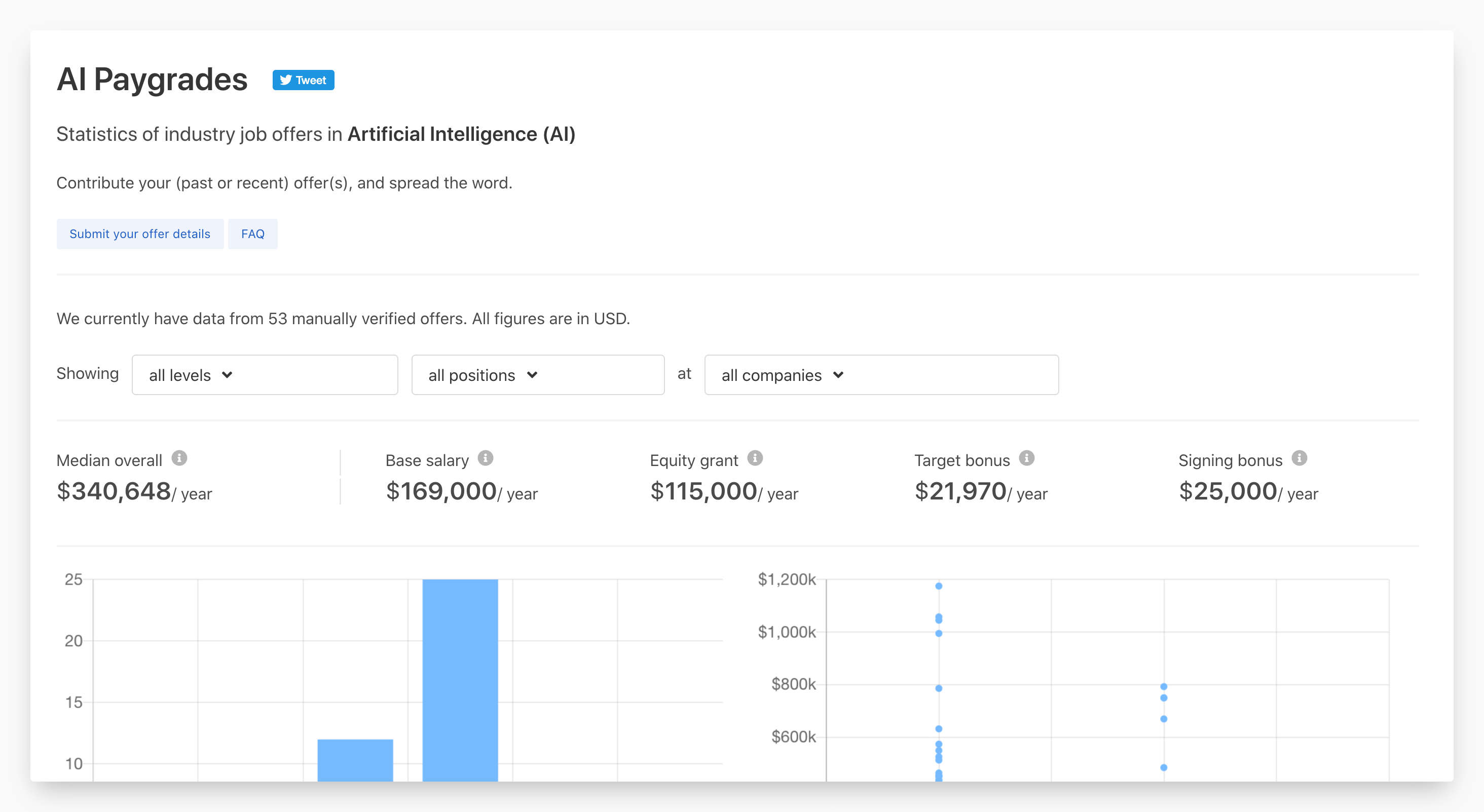

aipaygrad.es

aipaygrad.es provides statistics of industry job offers in Artificial Intelligence (AI).

All data is anonymous, cross-verified against offer letters and will hopefully reduce information asymmetry.

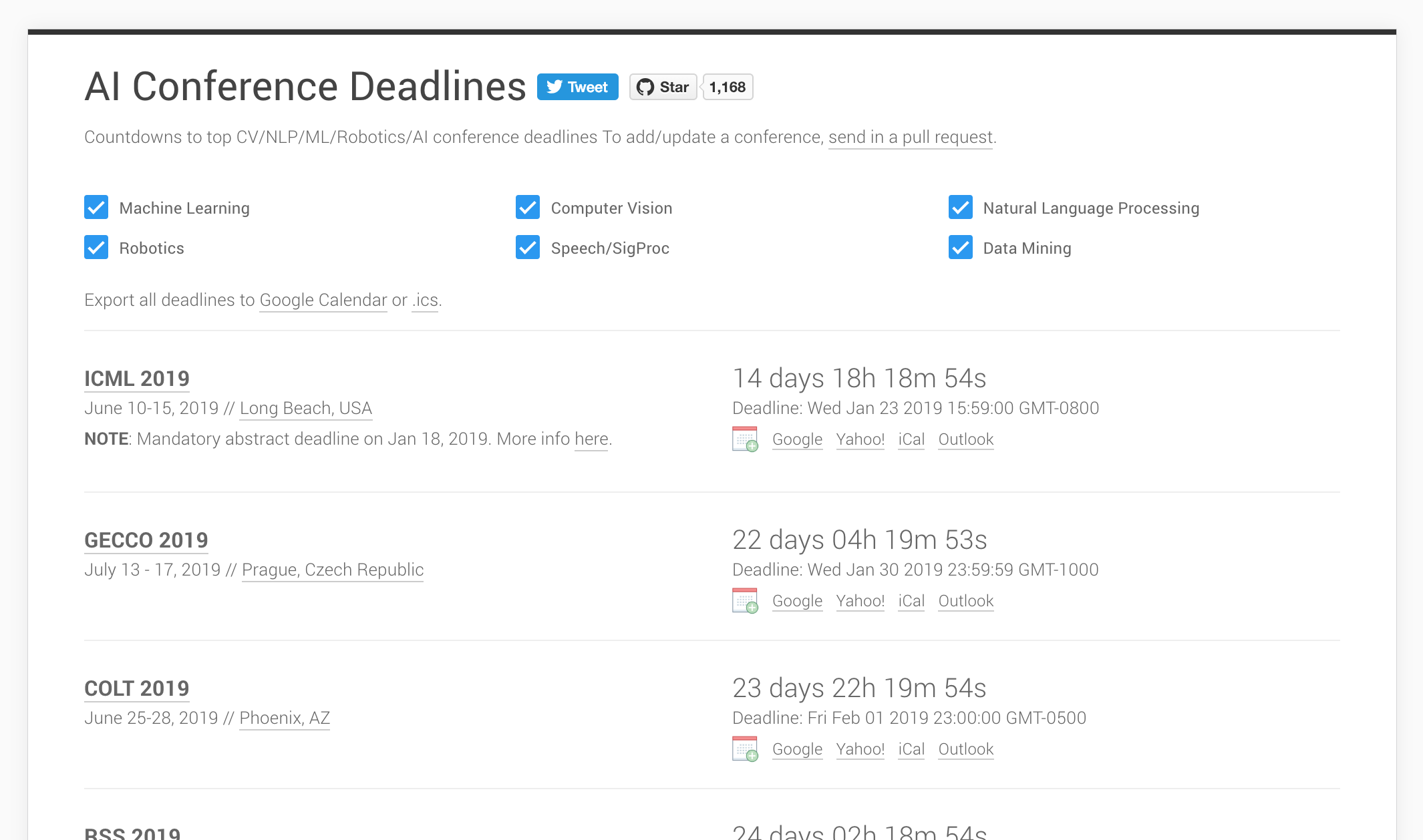

aideadlin.es

aideadlin.es is a webpage to keep track of CV/NLP/ML/AI conference deadlines. It's hosted on GitHub, and countdowns are automatically updated via pull requests to the data file in the repo.

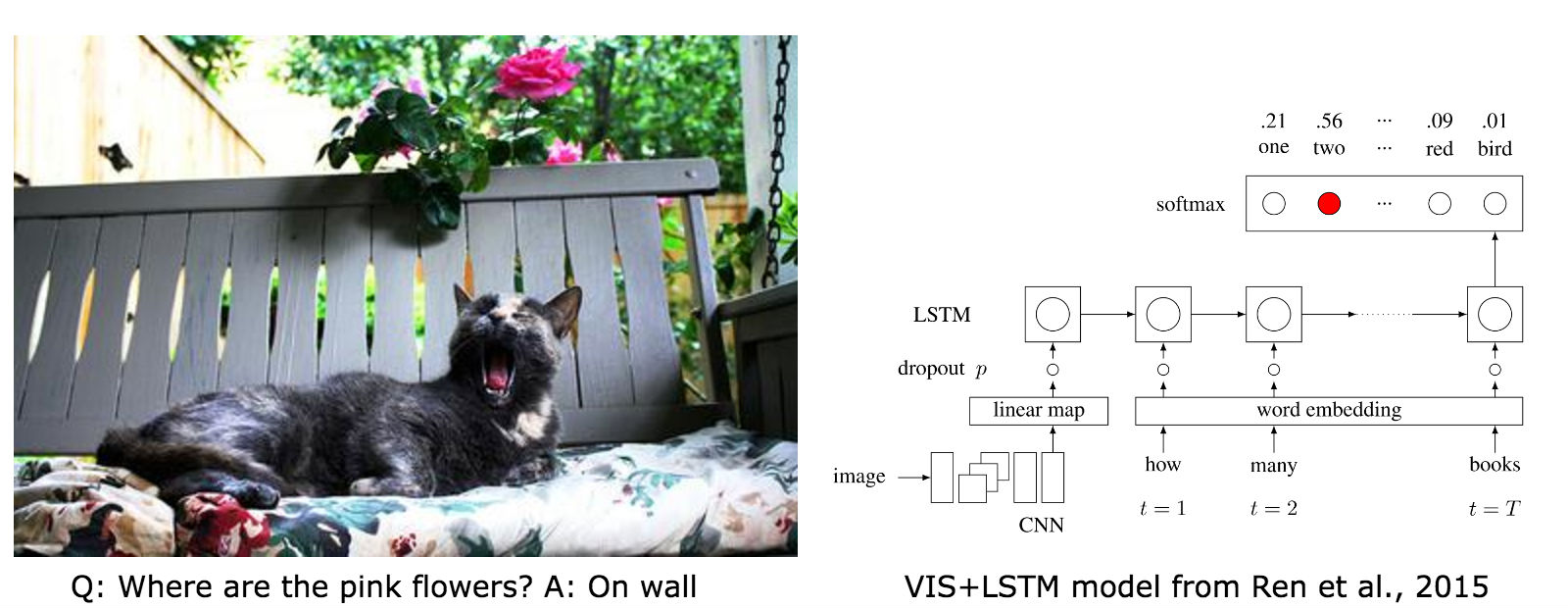

neural-vqa-attention

Torch implementation of an attention-based visual question answering model (Yang et al., CVPR16).

The model looks at an image, reads a question, and comes up with an answer to the question and a heatmap of where it looked in the image to answer it.

Some results here.

neural-vqa

neural-vqa is an efficient, GPU-based Torch implementation of the visual question answering model from the NIPS 2015 paper 'Exploring Models and Data for Image Question Answering' by Ren et al.

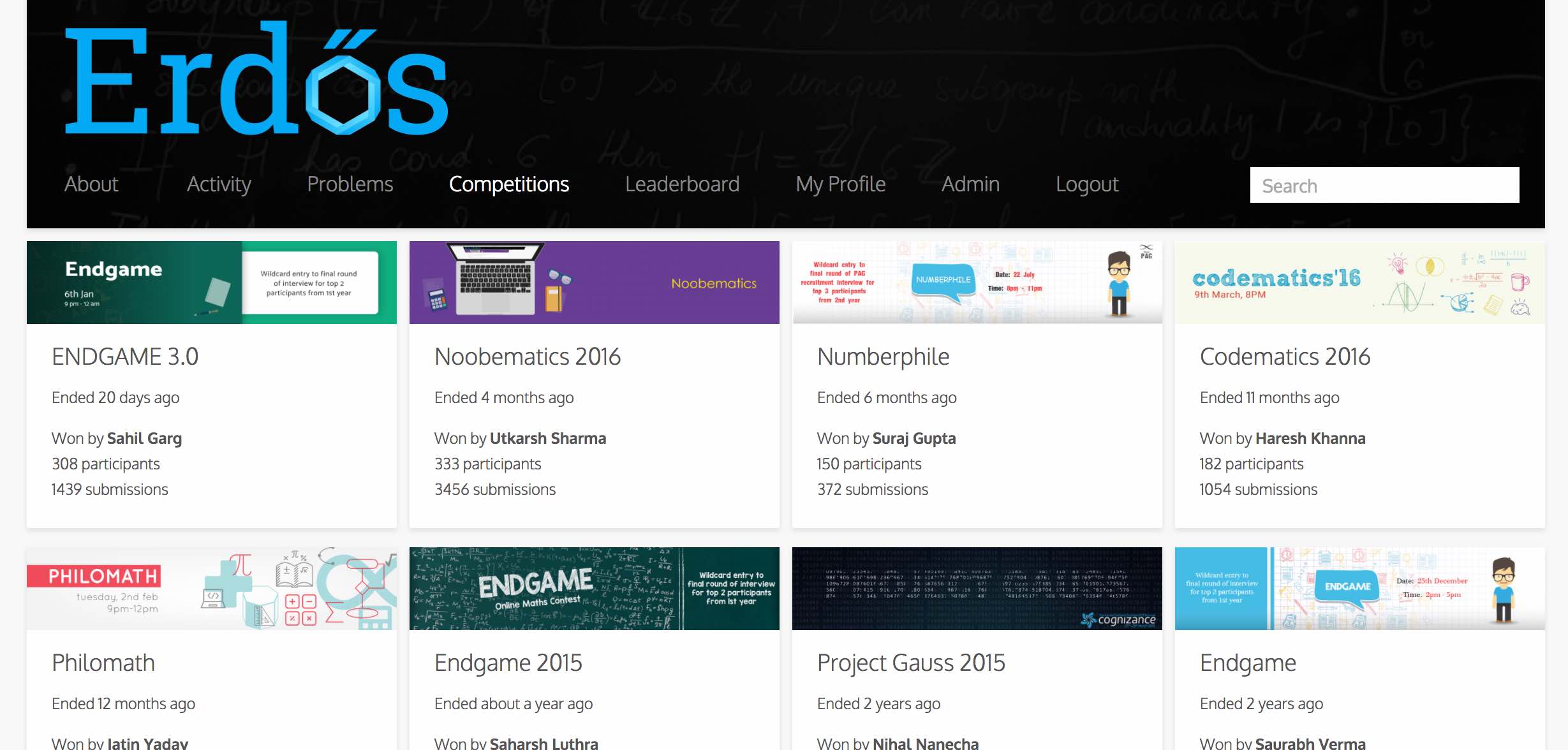

Erdős

Erdős by SDSLabs is a competitive math learning platform, similar in spirit to Project Euler, albeit more feature-packed (supports holding competitions, has a social layer) and prettier.

graf

graf plots pretty git contribution bar graphs in the terminal.

Run `gem install graf` to install.

HackFlowy

HackFlowy is an open-source clone of WorkFlowy.com, a beautiful, list-based note-taking website that has a 500-item monthly limit on the free tier :-(. "Make lists. Not war." :-)

AirMaps

AirMaps was a fun hackathon project that lets users navigate through Google Earth with gestures and speech commands using a Kinect sensor. It was the winning entry in Microsoft Code.Fun.Do.

HackView

Another fun hackathon-winning project, built during Yahoo! HackU! 2012. It involves WebRTC-based P2P video chat, and was faster than any other video chat provider (at the time, before Google launched Hangouts).

8tracks-downloader

Ugly-looking but super-effective bash script for downloading entire playlists from 8tracks. (Still works as of 10/2016.)